Expressive Interfaces

Dr Charles Martin

Announcements

- User Research marks and feedback out later this week (goal is Wednesday)

- GitLab template now available for final project

- Final project due: 2025-10-27 23:59 AEST

- Remember: main deliverable in the assignment is your presentation

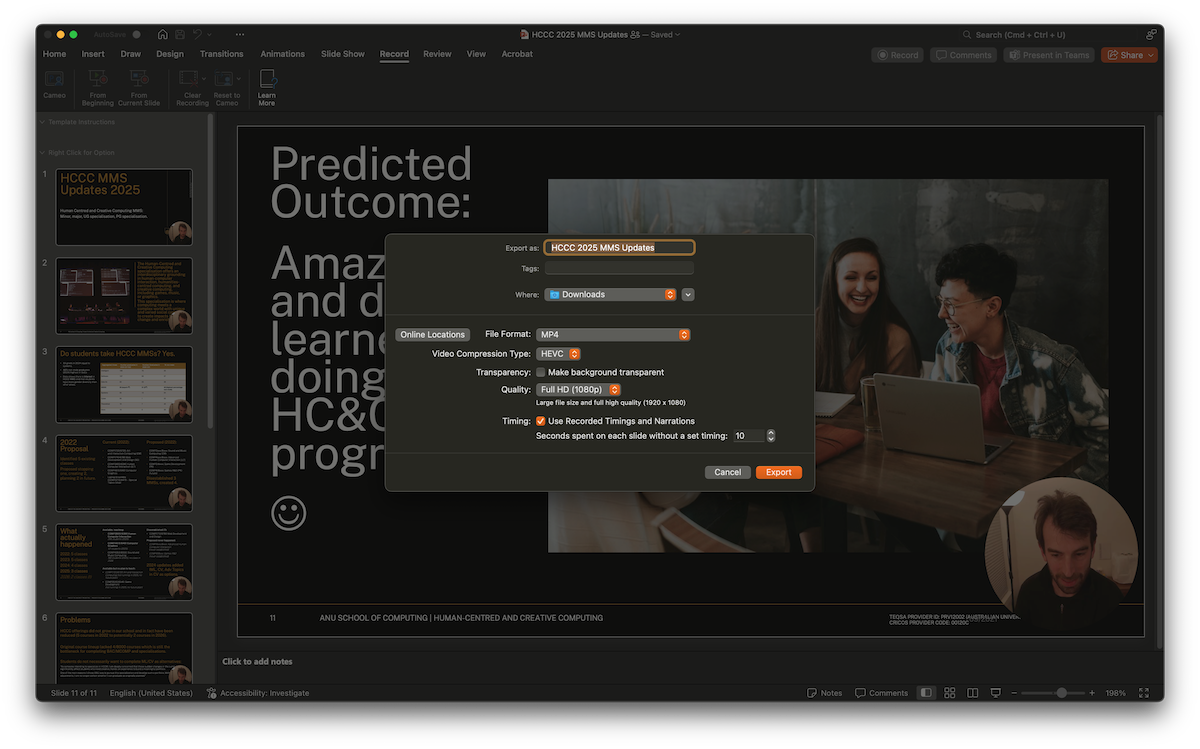

project-presentation.mp4 - Demo at end of lecture on recording a video in PowerPoint.

Plan for the class

- conceptualising expressive interactions

- drawing interactions

- music interactions

- dance interactions

- installed interactions

- playful interactions

- human-AI creative interactions

Conceptualising Expressive Interactions

Why? Expressive, artistic experiences can be drivers for HCI.

Supporting Creativity

Movement towards studying tools to support creativity.

“there is a move from routine work and productive concerns to human and creative ones.” (Edmonds, 2018)

Expression and creativity let us get inside the process of an interaction. People are interested in expressive experiences, leading to critique and understanding.

Principles for Creativity Support Tools (CSTs)

Shneiderman (2007) posits principles for developing CSTs

- Support exploration.

- Low threshold, high ceiling, and wide walls.

- Support many paths and many styles.

- Support collaboration.

- Support open interchange.

- Make it as simple as possible—and maybe even simpler.

- Choose black boxes carefully.

- Invent things that you would want to use yourself.

- Balance user suggestions with observation and participatory processes.

- Iterate, iterate—then iterate again.

- Design for designers.

- Evaluate your tools.

Artists as Power Users

Artists are “creative power users” (Linda Candy in Shneiderman (2007)).

Artists show us the boundaries of human-computer interaction

Studying interactive art gives us insight into the potential of creativity support tools in the hands of experts.

This translates into findings about “everyday creativity” (Edmonds, 2018)

there are three levels of design: standard spec, military spec and artist spec… the third, artist spec, is the hardest (and most important) (Buxton, 1997)

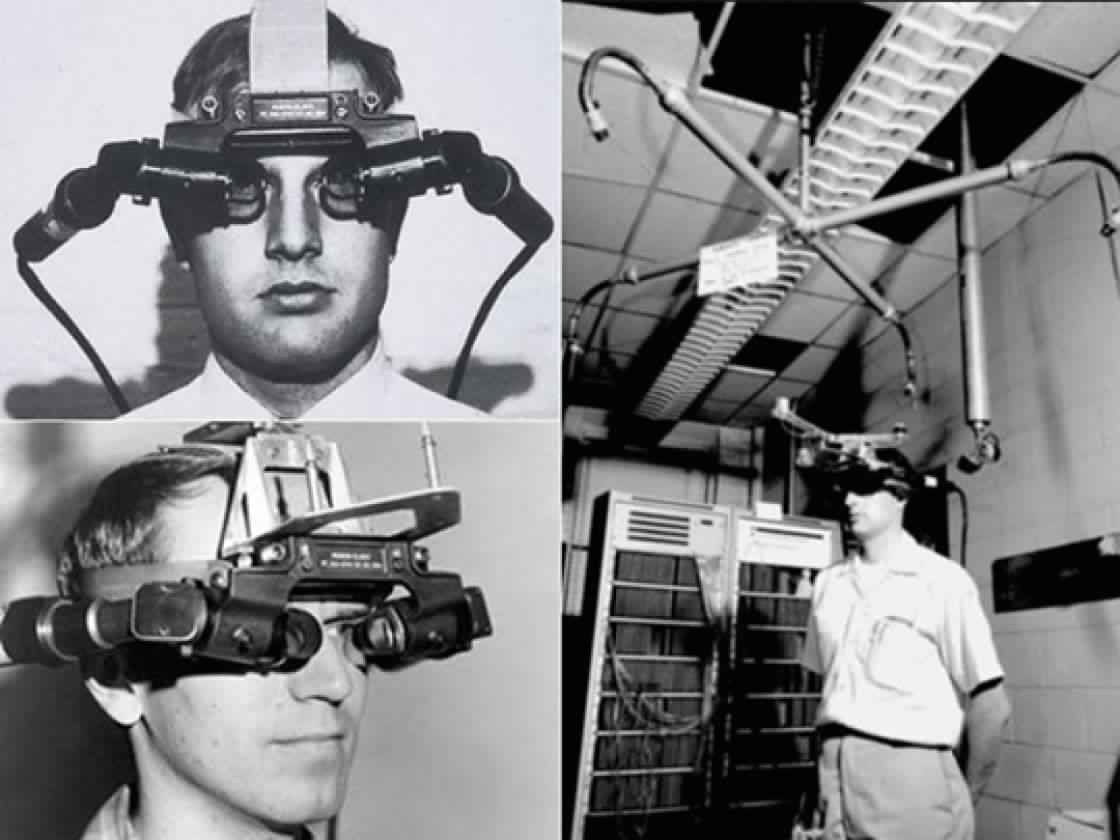

What is an expressive interaction?

Mapping sensed gestures to an expressive output that is fed back to the user.

- gestures: the use of motions by the limbs or body as a means of expression

- can be unintentional, control, or ancillary gestures

- from non-human actors (e.g., the movement of leaves on a branch of a tree)

- “any sort of motion, that may be understood as an expression of something”

The interaction itself is expressive, and the output is an expression as well. We consult Composing Interactions (Baalman, 2022) as a resource.

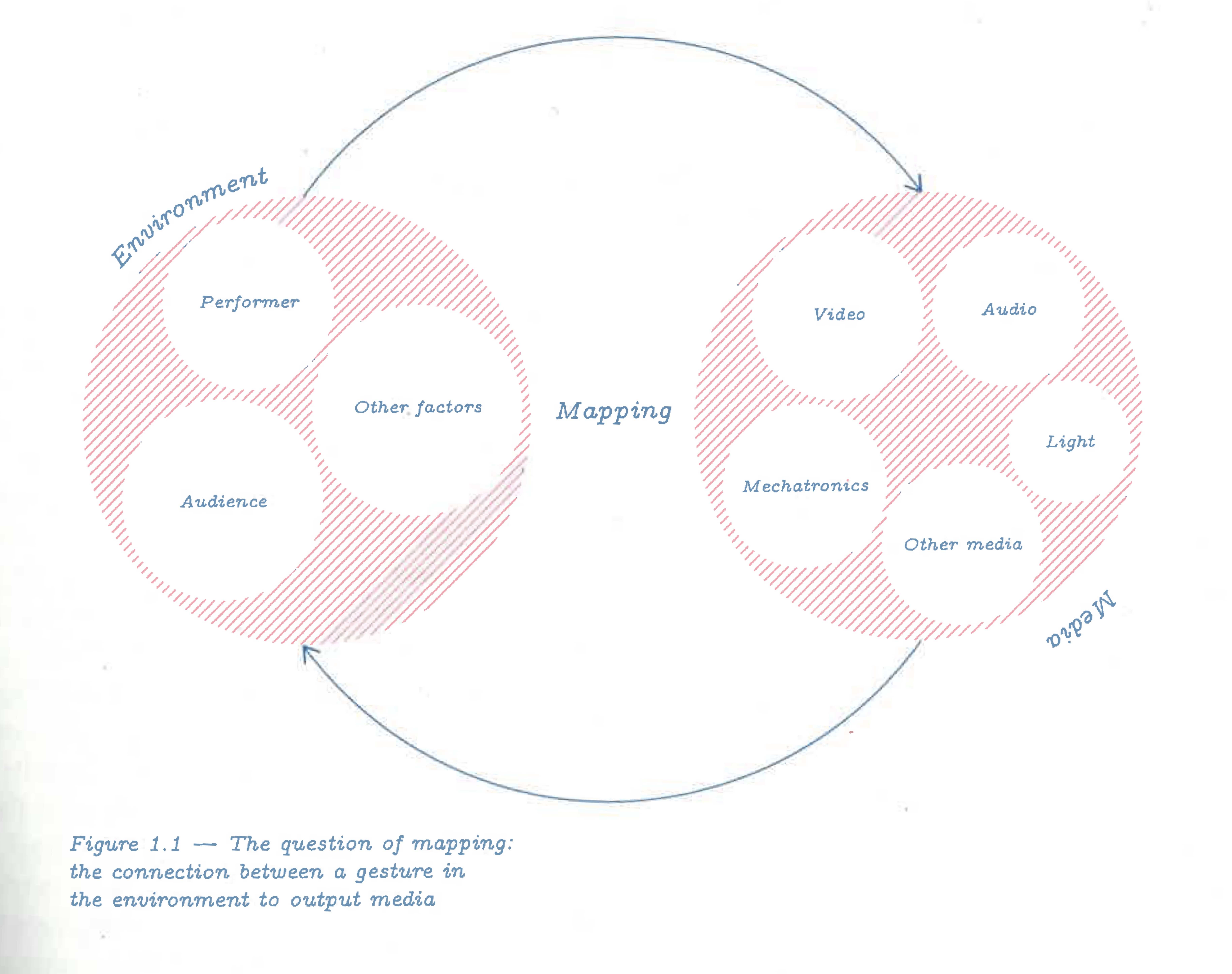

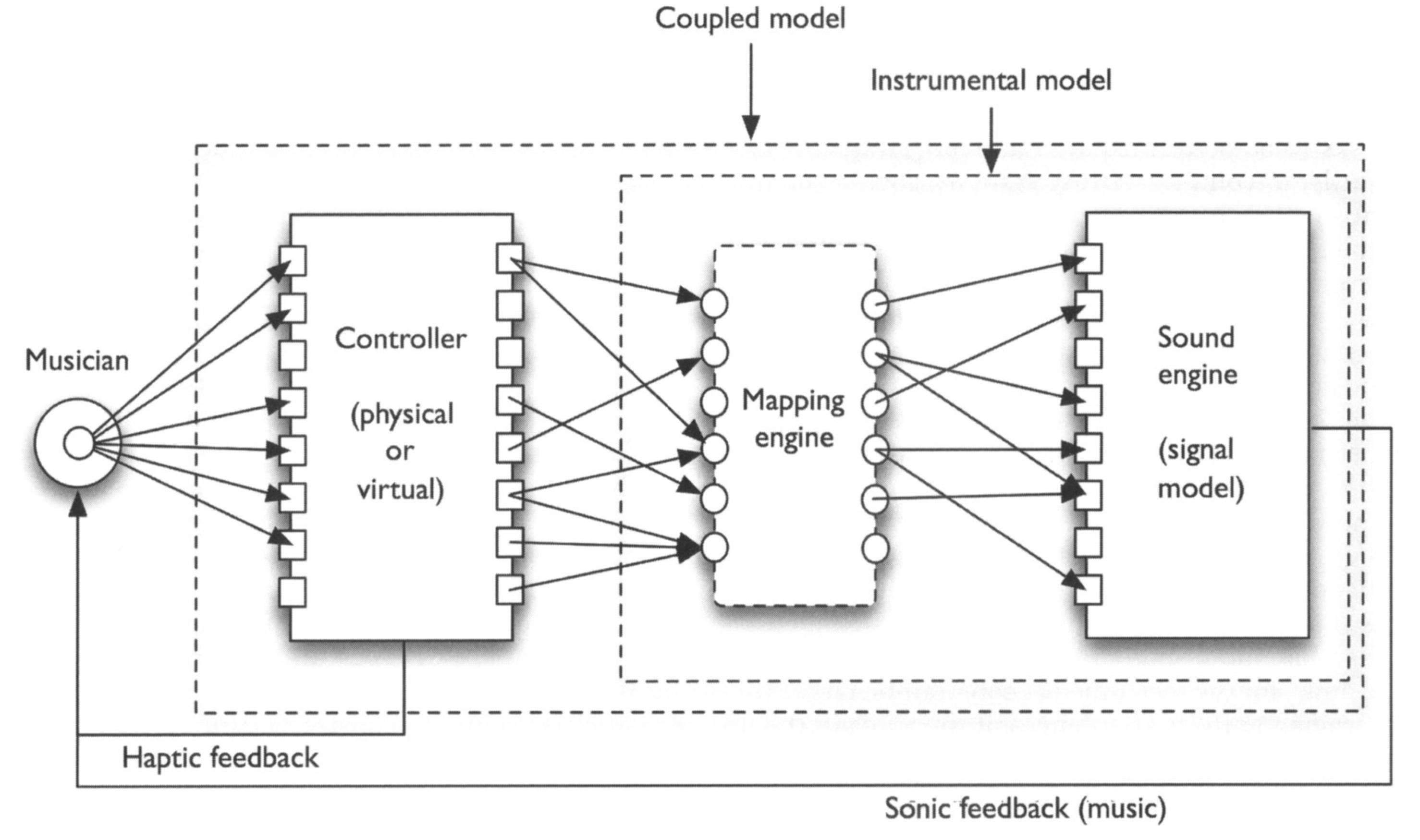

Mapping from Gesture to Output

Why is mapping an important consideration?

Consider a performer making gestures on a stage: these gestures effect changes in the output medium of sound, which can be heard by the performer and the audience in the real, physical environment.

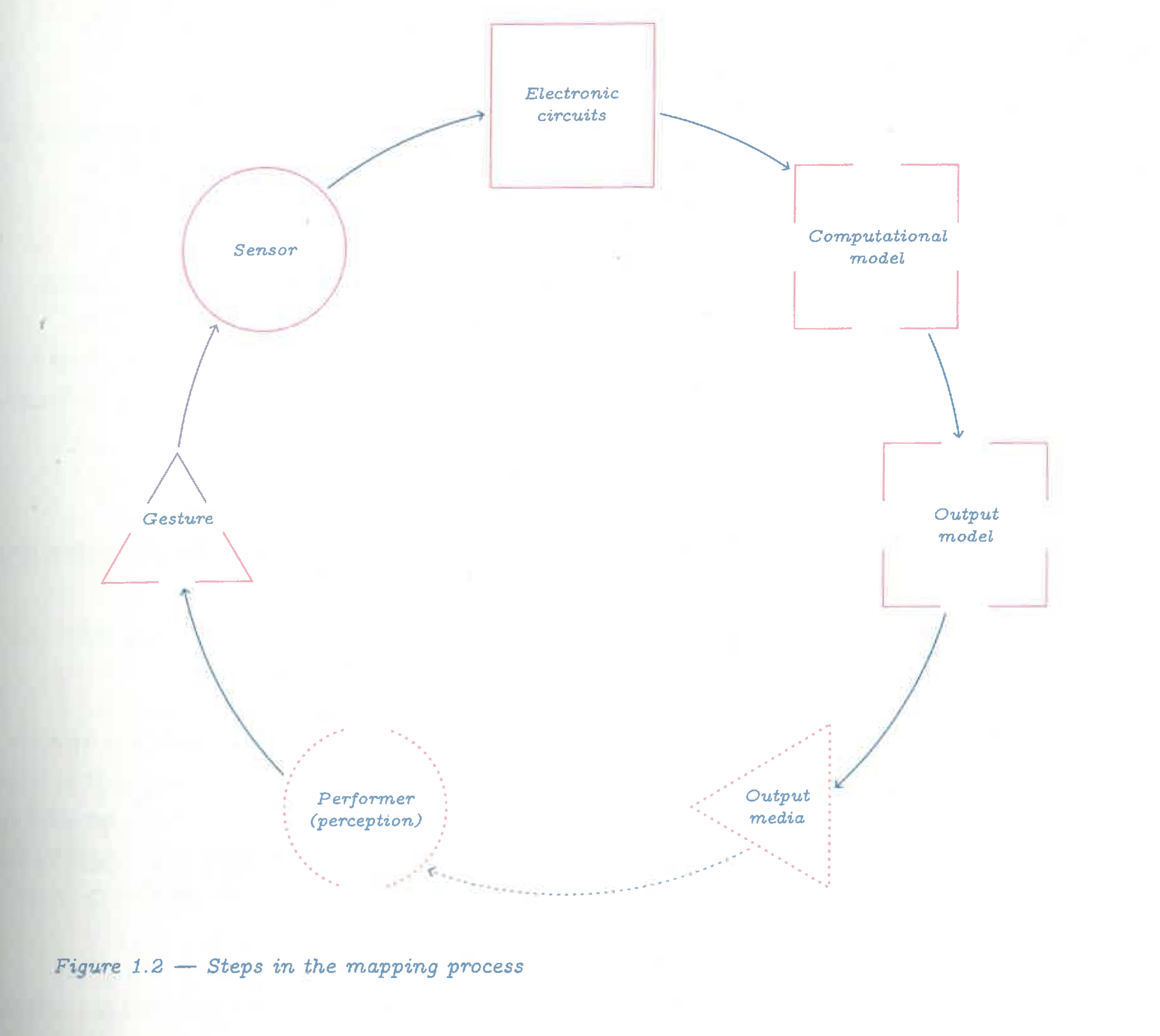

Steps in Mappings

Baalman (2022) expands the mapping process into a cycle.

- A gesture is performed in the environment;

- This is captured by a sensor that translates the gesture into an electrical signal, which is processed by an electronic circuit, often to digitise it;

- Next, the signal enters some sort of computational model that translates the data to parameters;

- These parameters control an output medium such as sound, light, video, or mechatronics.

How is the output of one step connected to the input of the next? What happens in each step?
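
The cycle above can be sketched as a chain of small functions, one per step, where each step's output is the next step's input. This is only an illustrative sketch, not code from Baalman (2022): the sensor range, the 10-bit quantisation, and the pitch and amplitude mapping are all assumptions chosen for the example.

```python
def sense(gesture_height_m):
    """Hypothetical sensor: hand height (0.0-2.0 m) becomes a 0.0-5.0 V signal."""
    clamped = min(max(gesture_height_m, 0.0), 2.0)
    return clamped / 2.0 * 5.0


def digitise(voltage, bits=10):
    """Electronic circuit step: quantise 0-5 V to an integer (assumed 10-bit)."""
    levels = 2 ** bits - 1
    return round(voltage / 5.0 * levels)


def model(reading, bits=10):
    """Computational model: translate sensor data into output parameters."""
    x = reading / (2 ** bits - 1)  # normalise to 0.0-1.0
    return {
        "frequency_hz": 220.0 * 2 ** (2 * x),  # spans two octaves from 220 Hz
        "amplitude": x,
    }


def render(params):
    """Output medium: here we only describe the sound instead of playing it."""
    return (f"sine {params['frequency_hz']:.1f} Hz "
            f"at amplitude {params['amplitude']:.2f}")


def mapping_cycle(gesture_height_m):
    """Gesture -> sensor -> circuit -> model -> output medium."""
    return render(model(digitise(sense(gesture_height_m))))


print(mapping_cycle(0.0))
print(mapping_cycle(2.0))
```

Keeping each step separate makes it easy to ask what happens in that step, and to swap one step (for example, a different `model`) without touching the others.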

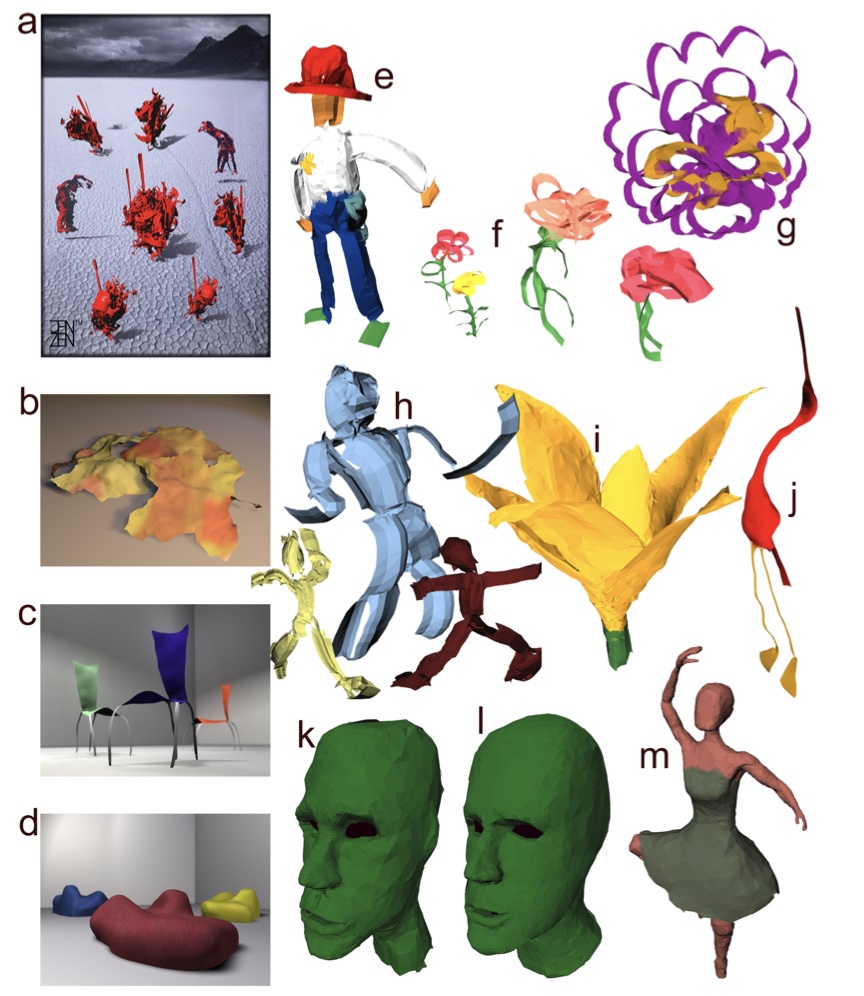

Drawing Interaction

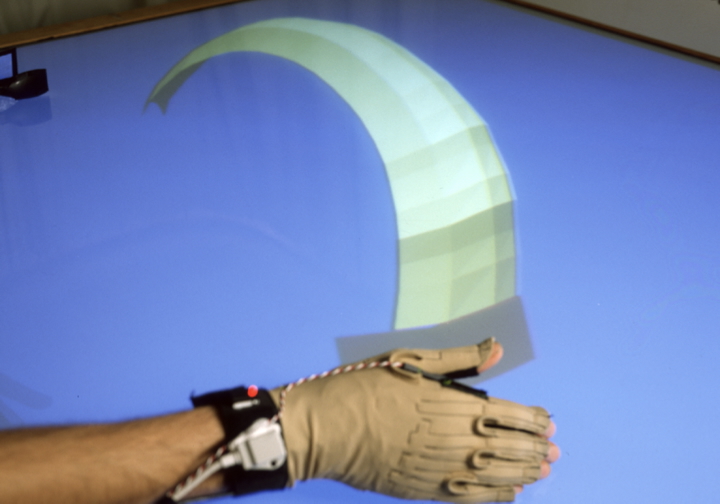

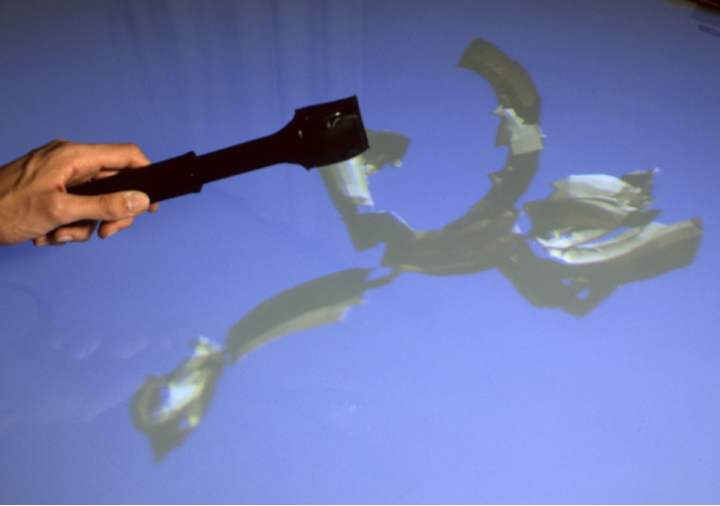

Surface Drawing System

Drawing with hands, moving, scaling, erasing (a pair of kitchen tongs) (Schkolne et al., 2001)

AirPens

- AirPens: Musical Doodling

- Make music with mark-making.

- Use IMU sensors to convert movement into sound; explore different mappings between movement and sound.
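
To make "different mappings between movement and sound" concrete, here is a minimal sketch of two alternative mappings from a single accelerometer sample. It is not the AirPens implementation: the sample format (acceleration in g on three axes), the `max_g` limit, and the MIDI note range are assumptions for illustration.

```python
import math


def magnitude(ax, ay, az):
    """Length of the acceleration vector, in g."""
    return math.sqrt(ax * ax + ay * ay + az * az)


def energy_to_volume(sample, max_g=4.0):
    """Mapping A: overall movement energy controls loudness (0.0-1.0)."""
    return min(magnitude(*sample) / max_g, 1.0)


def tilt_to_pitch(sample, low_note=48, high_note=72):
    """Mapping B: tilt of the pen selects a MIDI note between low and high."""
    ax, _, az = sample
    tilt = math.atan2(ax, az)  # radians
    tilt = max(-math.pi / 2, min(math.pi / 2, tilt))
    position = (tilt + math.pi / 2) / math.pi  # 0.0-1.0
    return round(low_note + position * (high_note - low_note))


resting = (0.0, 0.0, 1.0)  # pen lying flat: gravity only
print(energy_to_volume(resting), tilt_to_pitch(resting))
```

The same sensor data feels very different to play depending on which mapping is active, which is why exploring mappings is part of the design work.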

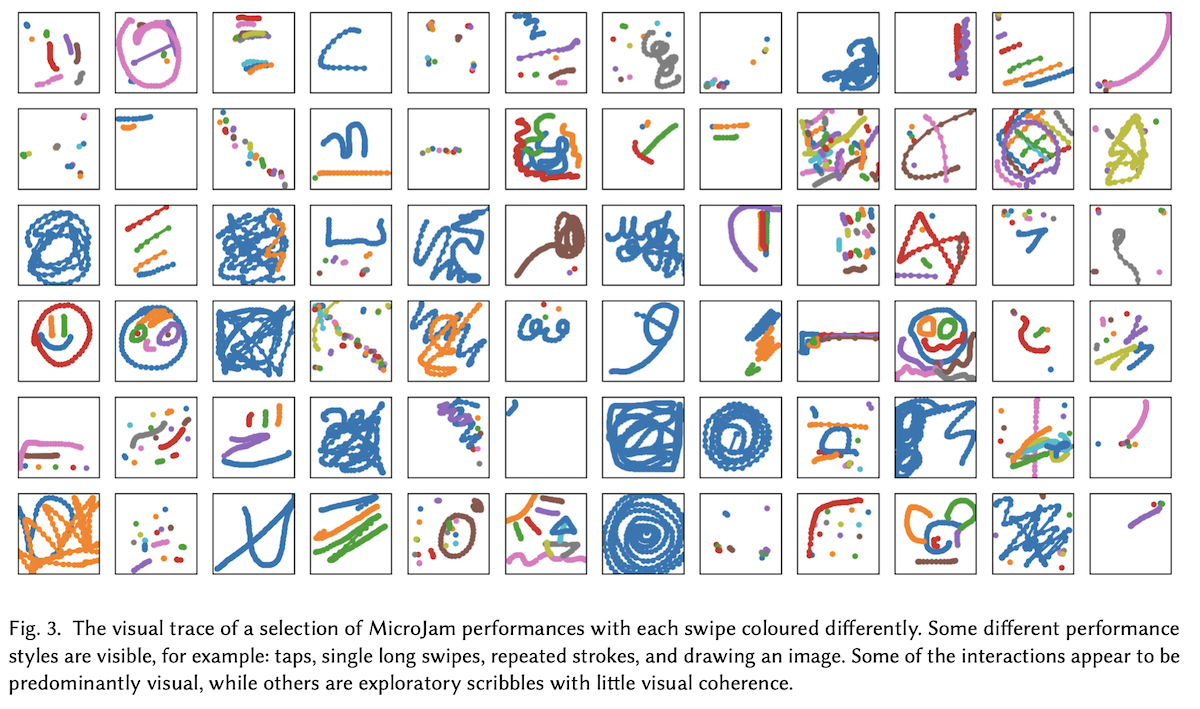

MicroJam

App for making short (5s) musical performances with a sketch (video and info). (Martin & Torresen, 2020)

- Uses touch location and movement to create sound.

- Replay performances by rewinding the sketch and viewing it again.

- Social media interface: view other people’s drawings and add layers as a musical “reply”

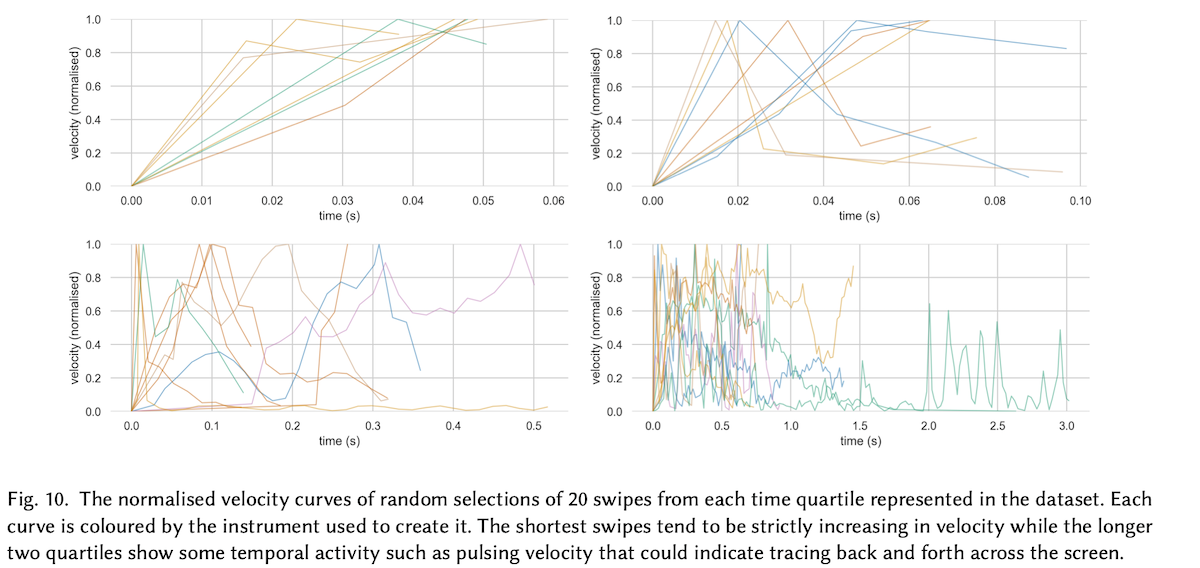

- Analysed 1600 tiny jams to understand musical drawing behaviour.
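
As a rough illustration of using touch location and movement to create sound within a 5-second performance, the sketch below turns a list of timestamped touches into note events. It is not MicroJam's actual mapping: the pentatonic scale, the note range, and the speed-to-loudness rule are assumptions made for the example.

```python
import math
from dataclasses import dataclass

PENTATONIC = [0, 2, 4, 7, 9]  # semitone offsets within one octave


@dataclass
class Touch:
    t: float  # seconds since the performance started
    x: float  # horizontal position, 0.0-1.0
    y: float  # vertical position, 0.0-1.0


def touch_to_note(touch, base_note=48, octaves=3):
    """Location: quantise x position to a pentatonic MIDI note."""
    steps = len(PENTATONIC) * octaves
    index = min(int(touch.x * steps), steps - 1)
    octave, degree = divmod(index, len(PENTATONIC))
    return base_note + 12 * octave + PENTATONIC[degree]


def speed(prev, cur):
    """Movement: distance travelled per second between two touches."""
    dt = cur.t - prev.t
    if dt <= 0:
        return 0.0
    return math.hypot(cur.x - prev.x, cur.y - prev.y) / dt


def sketch_to_events(touches, max_duration=5.0):
    """Turn a sketch into (time, note, loudness) events, cut off at 5 s."""
    events = []
    prev = None
    for touch in touches:
        if touch.t > max_duration:
            break
        loudness = 0.5 if prev is None else min(1.0, 0.2 + speed(prev, touch))
        events.append((touch.t, touch_to_note(touch), round(loudness, 2)))
        prev = touch
    return events


jam = [Touch(0.0, 0.0, 0.5), Touch(0.5, 0.5, 0.5), Touch(6.0, 1.0, 0.5)]
print(sketch_to_events(jam))
```

Because the events keep their timestamps, the same list can be replayed later, which is the idea behind rewinding a sketch.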

Music Interaction

The New Interfaces for Musical Expression (NIME) research community.

- Research into musical instrument design that explores how technological innovation can enable new musical expression, enhance performer control and intimacy, and shape the musician-instrument relationship.

- Digital Musical Instruments (DMIs): digital piano, drum pad.

- Augmented instruments: magnetic resonator piano (grand piano -> string instruments).

- Novel instruments: Lady’s Glove, magnetic AI instrument Thales (Privato et al., 2023), percussive instrument PhaseRings (C. Martin, 2018), AR instrument cube (Wang & Martin, 2022).

PhaseRings: natural gestures on big touchscreens

How can we perform music on big touchscreens?

- Rather than re-implement music production apps, looked at natural gestures in long-term artistic practice.

- Lots of apps from 2011–2014 and lots of performances e.g., Martin (2016)

- Initially, sought to understand how percussionists would use touchscreens (C. Martin et al., 2014)

- Then explored networked connections in ensemble performance (C. Martin et al., 2015)

- Then compared how networked ensembles were supported by the interface (C. Martin et al., 2016)

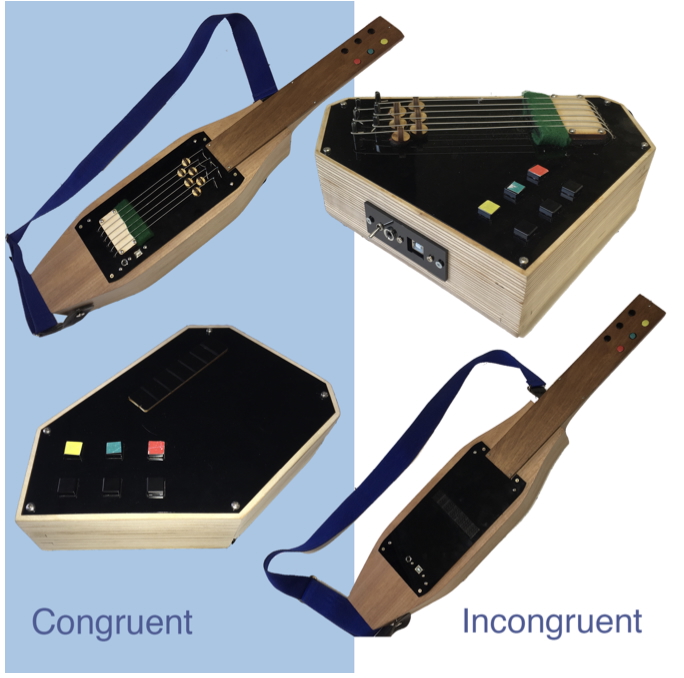

Hyperinstruments: non-guitar guitars

- When is a Guitar not a Guitar? (Harrison et al., 2018): novel instruments and controllers resemble traditional instruments.

- Four designs examining variation in form (held vs tabletop) and interaction (strings vs touch sensor)

- Guitarists prefer the technical familiarity of the stringed instrument

- Non-musicians prefer touch-interface: ease of use, cultural load of the guitar form

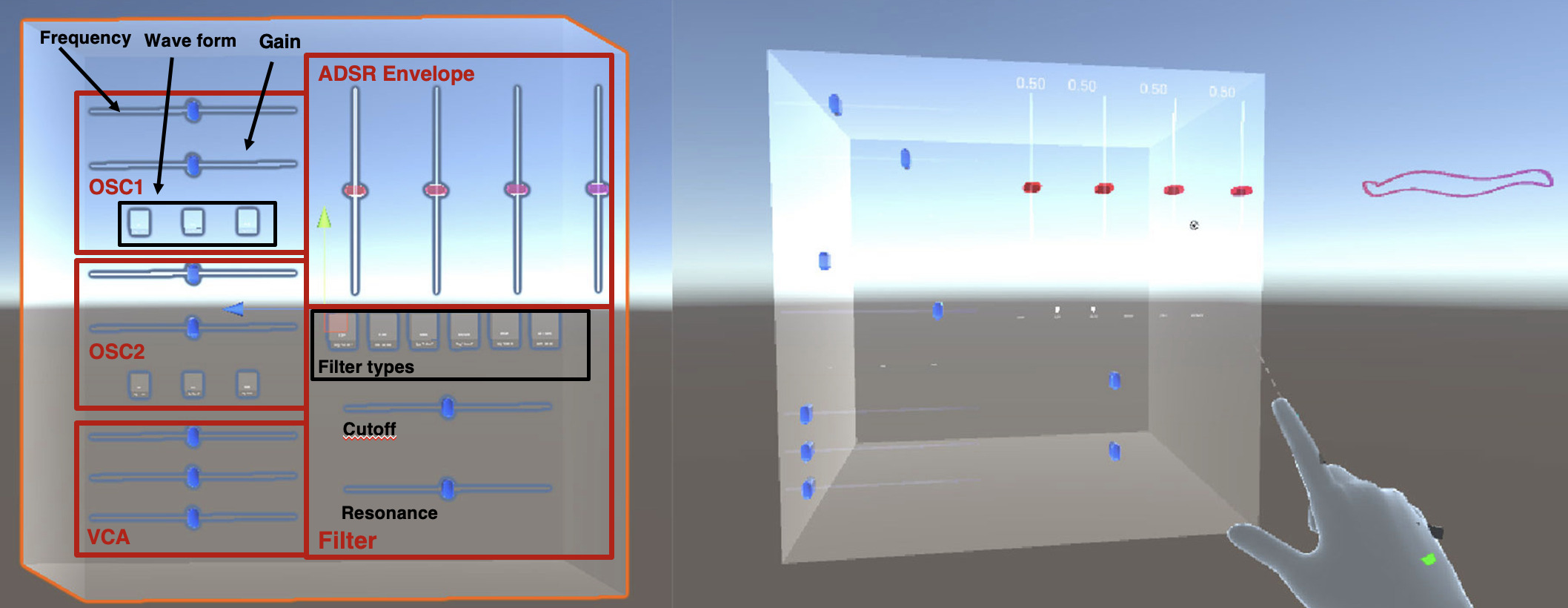

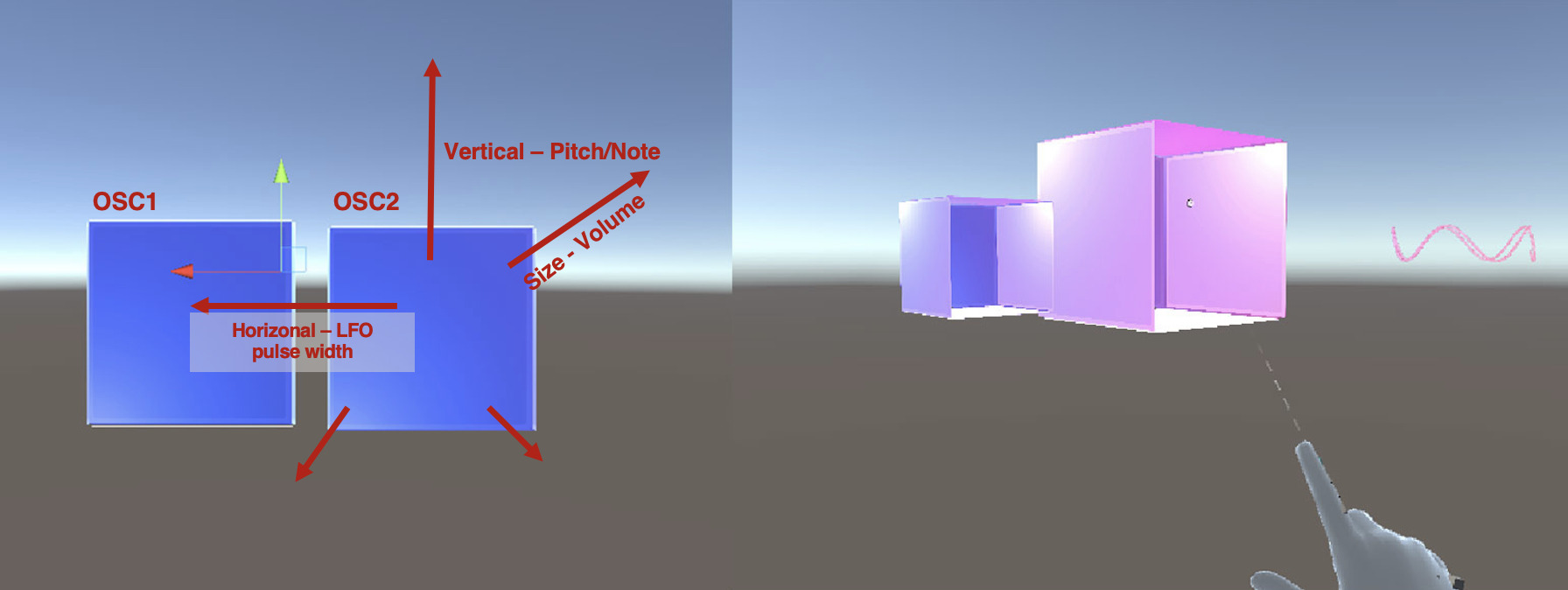

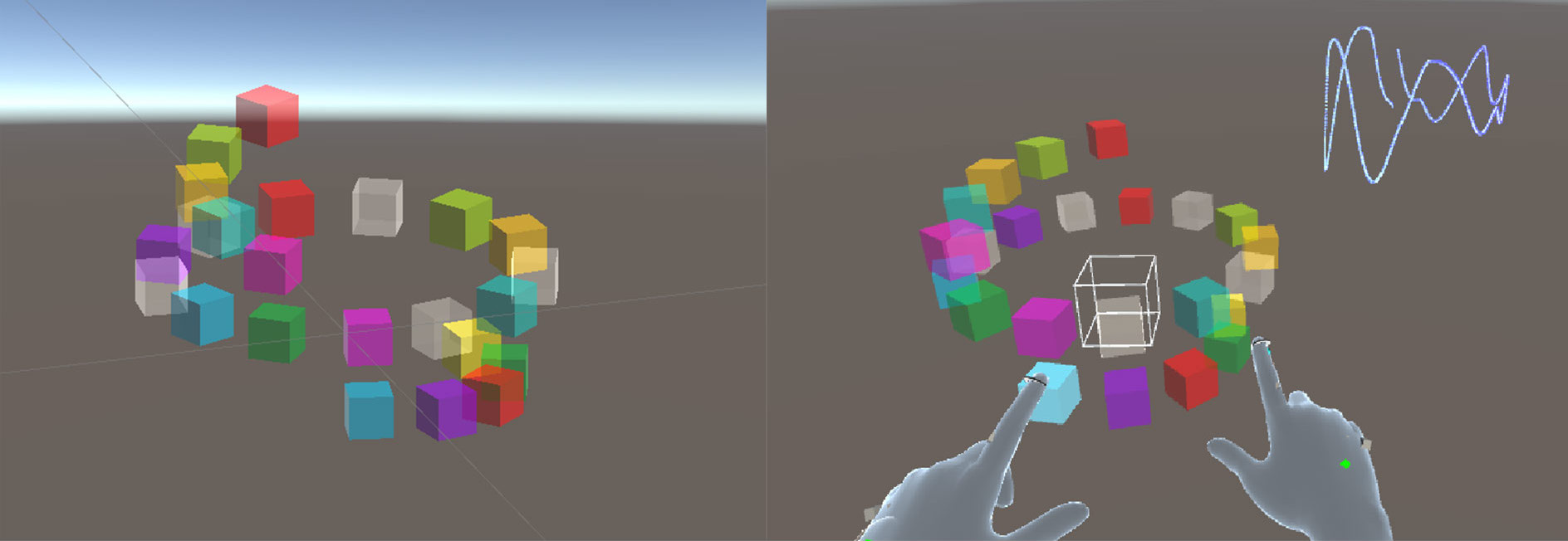

Authentic musical instruments for AR

cube system: authentic design for a head-mounted AR musical instrument (Wang & Martin, 2022)

Autobiographical design (Desjardins et al., 2021) to compare three candidate interfaces

- Physical interface: slow accurate manipulation due to hand tracking.

- Spatial interaction: emerged from bodily movement allowing ease of use.

- Flexible freehand interaction: allows multiple notes to be played simultaneously, taking advantage of the full-hand tracking affordance in the AR headset.

Gesture to Sound Mappings in Music

- Traditional approach: decompose input actions, sound production and feedback (Miranda & Wanderley, 2006; Wessel & Wright, 2002).

- New sounds, music, and experiences: expanded control over timbre and texture, richer and more diverse musical experience (Magnusson, 2010; Wessel & Wright, 2002).

- Control intimacy: mediated gesture and sound relation (Wessel & Wright, 2002), a limit on the machine-centred approach (Pigrem & McPherson, 2018).

- Current research: finding better frameworks. Performer and instrument engage in a mutually influential relationship during the design process (McPherson et al., 2024).

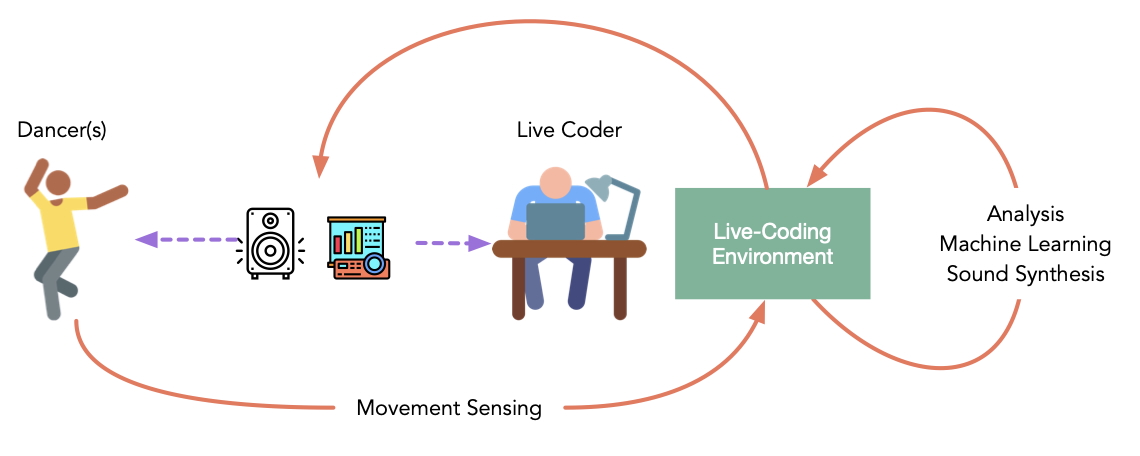

Dance Interaction

From gestures to body (embodied) movements.

- Support real-time manipulation of continuous streams of the dancers’ motion data for interactive sound synthesis.

- Enable novel dance improvisations through live coding.

- Live coding: interactively programming musical or visual processes as performance.

Co/da system

- Movements are measured using motion sensors, and the live coder processes motion signals to generate feedback in real-time.

- Enable a multitude of feedback loops: sound feedback -> movement improvisation -> the coder alters the relationships between movement and sound.

- Dynamic improvisation that stimulates the exploration of novel movements.
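
The feedback loop can be illustrated with a mapping that is rewritten while motion data keeps streaming, so the same movement starts producing a different sound mid-performance. This is a toy sketch, not the Co/da implementation: `LiveMapping`, the scalar motion values, and the pitch formulas are hypothetical.

```python
class LiveMapping:
    """A movement-to-sound mapping that can be replaced during a performance."""

    def __init__(self, fn):
        self.fn = fn

    def rewrite(self, fn):
        """The live coder swaps in a new relationship between motion and sound."""
        self.fn = fn

    def __call__(self, motion):
        return self.fn(motion)


def perform(stream, mapping, edits):
    """Process motion frames in order; `edits` maps a frame index to new code."""
    output = []
    for i, motion in enumerate(stream):
        if i in edits:
            mapping.rewrite(edits[i])
        output.append(round(mapping(motion), 1))
    return output


# Motion intensity per frame (0.0-1.0), e.g. derived from a motion sensor.
stream = [0.1, 0.5, 0.9, 0.5]

# Initially more movement gives a higher pitch; at frame 2 the coder inverts it.
mapping = LiveMapping(lambda m: 200 + 600 * m)
edits = {2: lambda m: 800 - 600 * m}

print(perform(stream, mapping, edits))
```

After the edit, the dancer hears an unexpected response to a familiar movement, which is the kind of prompt that can push an improvisation in a new direction.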

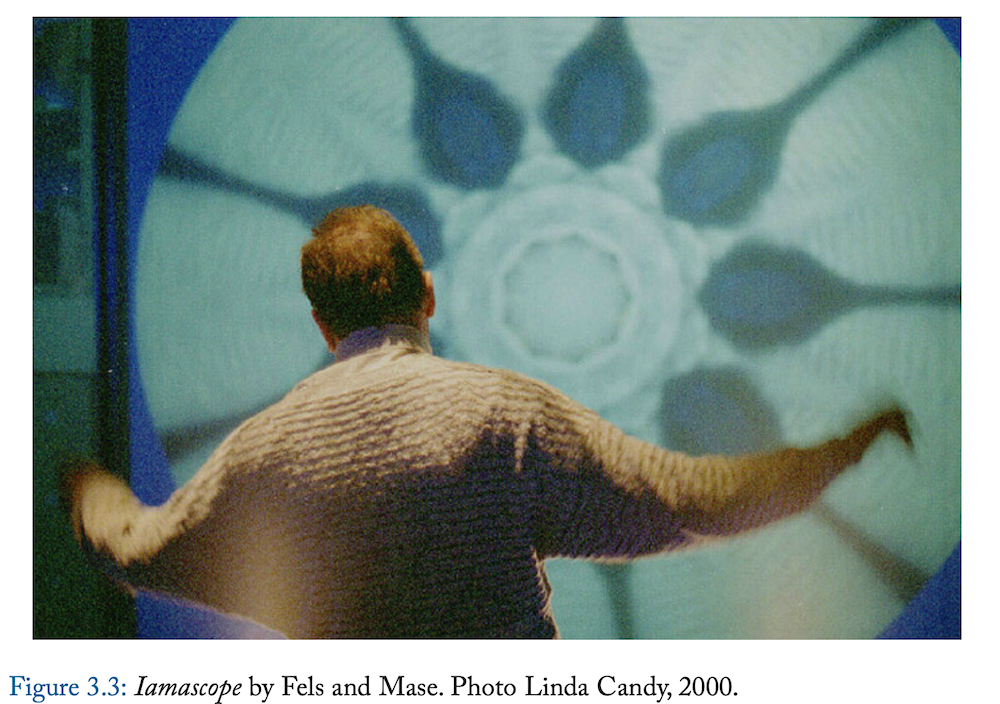

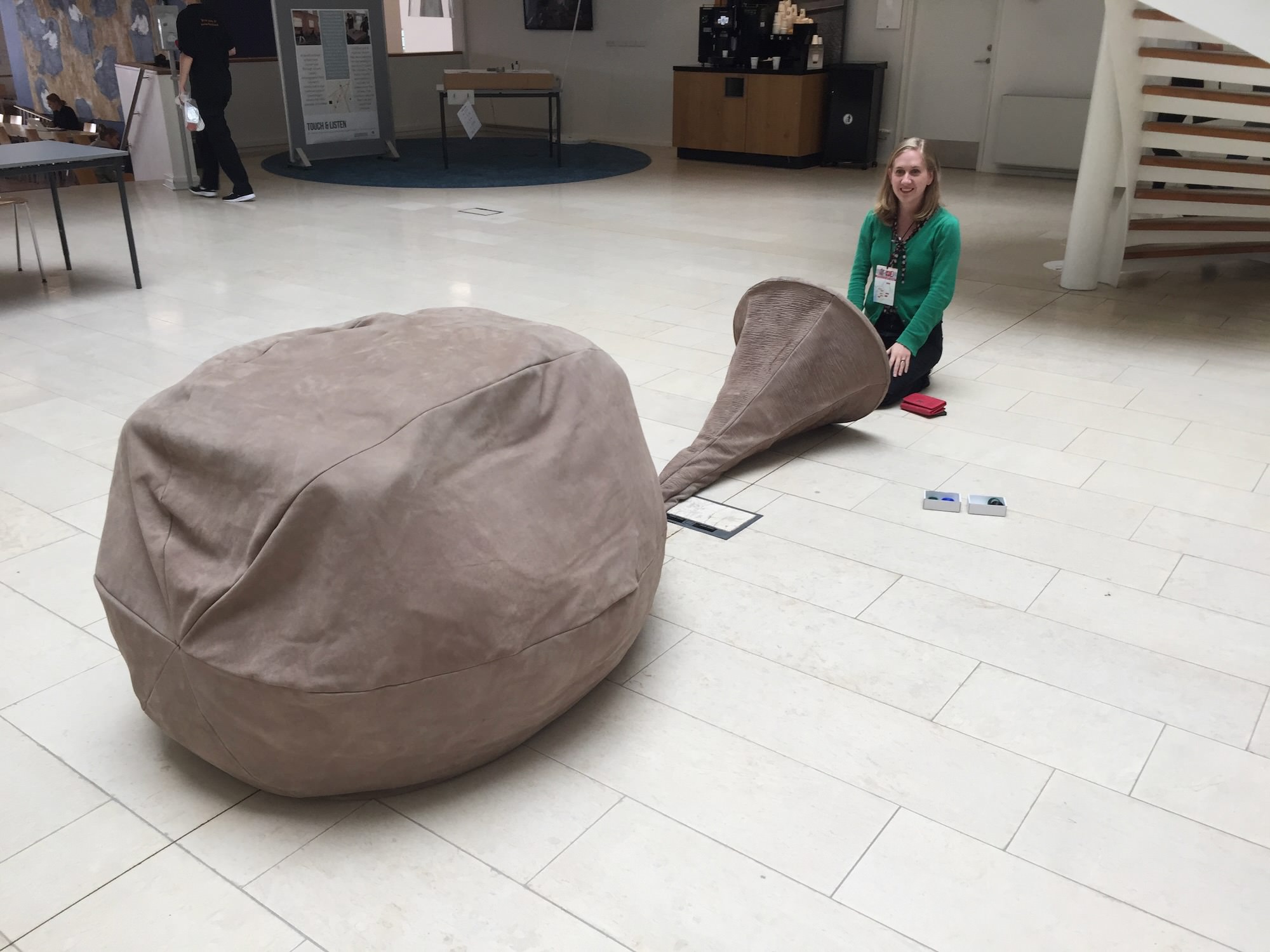

Installed Interactions

Putting expressive interactions into public places.

Dinosaur Choir

Dinosaur Choir: Adult Corythosaurus

- Singing dinosaur skull musical instruments.

- Experience dinosaur vocalisation: imagine dinosaur vocal anatomy based on a bird syrinx (vocal structure is an open question in paleontology)

- Microphone for user, computational vocal model, sound resonates through a 3D printed replica of the dinosaur’s nasal cavities and skull

- Change the pitch and timbre of the vocalisation by changing the shape of the mouth, like a trumpet player

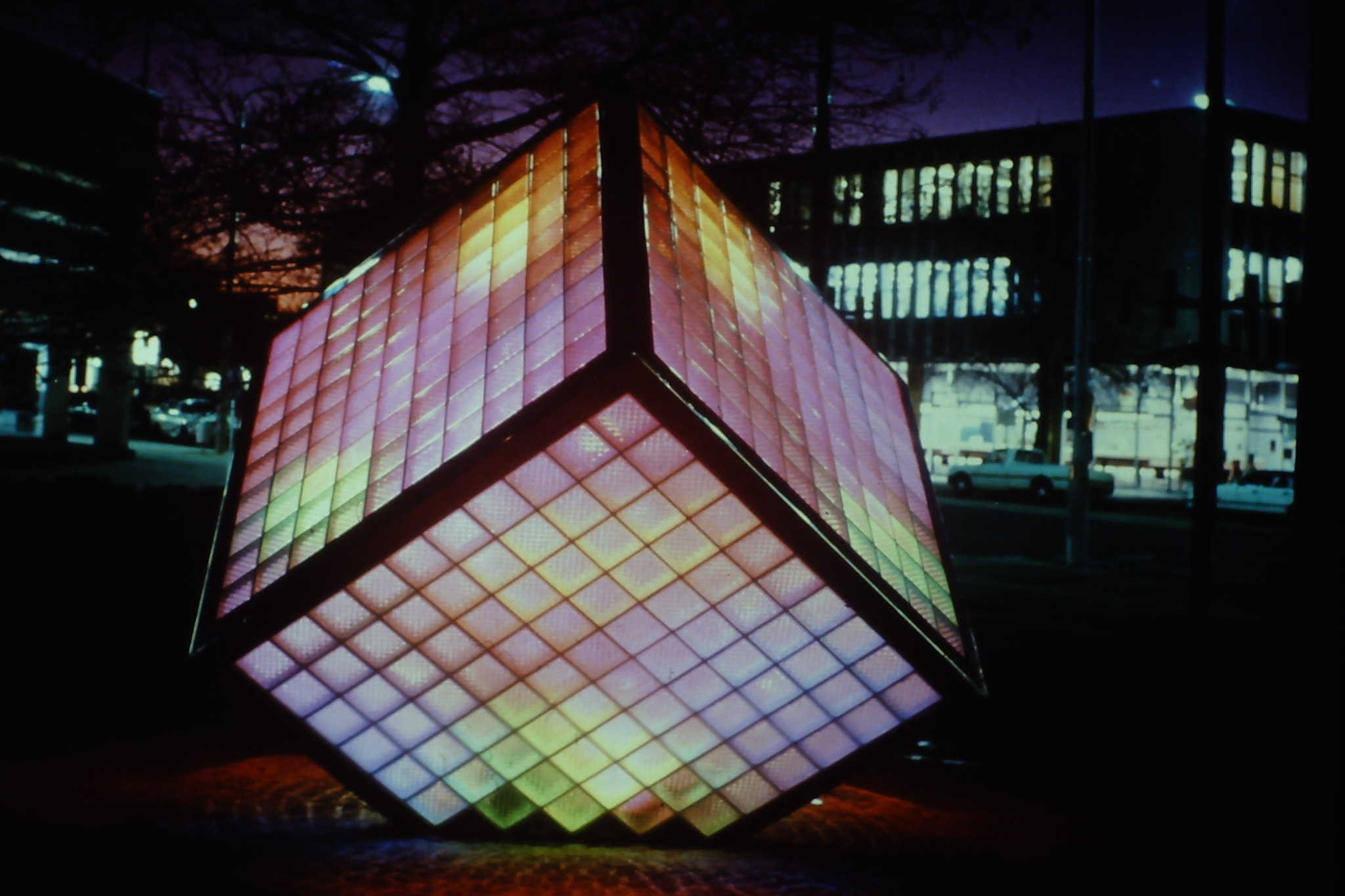

Illumicube (in Canberra!)

- Interactive sculpture in Canberra by Kerry Simpson (1988)

- Glass and sound (now movement) activated lighting

- Location: Ainslie Avenue, Canberra

Playful installed interaction can lead to unwanted behaviour! Noise from folks exiting Civic pubs!

Playful Interaction

Can silly or playful ideas turn into interesting interactions?

Can we use play to examine more serious HCI concepts?

It’s fun to make the world more fun.

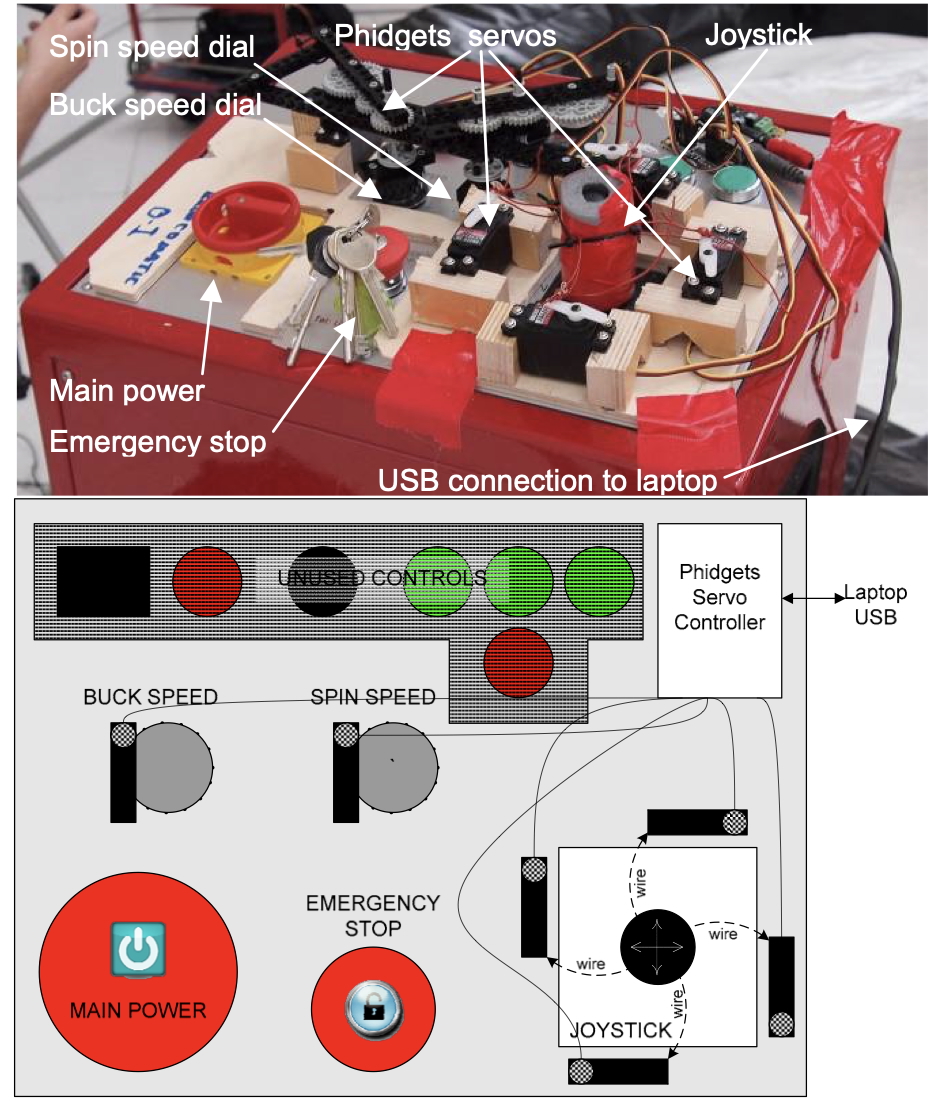

Breath Controlled Amusement Ride

Can an amusement ride be controlled by breath?

- Inspired by robotic technologies for control of individual seats on rollercoasters and other thrill rides.

- the Broncomatic is a bucking bronco game (horse riding; you try to stay on!)

- The twist is it’s controlled by the user’s breath

- the ride kicks you around and if you lose control it kicks harder (until you fall off)

“Breath Control of Amusement Rides” (Marshall et al., 2011)

More on the Broncomatic

- A straightforward mapping: the rider’s breathing to the horizontal rotation of the ride.

- Inhale: spins clockwise; exhale: spins anti-clockwise.

- Breathing speed controls rotation speed: faster breathing gives a faster spin; holding your breath stops the spinning.

- Difficulty levels.

- The program is a game in which the player scores more points the more that they breathe: a physical challenge vs reward dynamic.

- More breathing for faster ride but harder to stay on. To score high, you must breathe more, but this makes the ride tougher.
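
The breath-to-rotation mapping and the scoring trade-off can be sketched as follows. This is an assumption-laden illustration, not the code from Marshall et al. (2011): the signed `breath_flow` value, the `dead_zone` for breath holding, the `gain` standing in for difficulty level, and the scoring formula are all made up for the example.

```python
def rotation_speed(breath_flow, gain=1.0, dead_zone=0.05):
    """Map breathing to signed rotation speed.

    breath_flow > 0 means inhaling (clockwise, positive speed),
    breath_flow < 0 means exhaling (anti-clockwise, negative speed),
    and values inside the dead zone count as holding breath (no spin).
    """
    if abs(breath_flow) < dead_zone:
        return 0.0
    return gain * breath_flow


def play(breath_samples, dt=0.1, points_per_unit=10.0, gain=1.0):
    """Run a ride: return the rotation speeds and the score earned."""
    speeds = []
    score = 0.0
    for flow in breath_samples:
        speeds.append(rotation_speed(flow, gain))
        score += abs(flow) * dt * points_per_unit  # more breathing, more points
    return speeds, score


# Inhale hard, exhale hard, then hold: two spins in opposite directions, then rest.
speeds, score = play([1.0, -1.0, 0.0], dt=0.5)
print(speeds, score)
```

The same quantity, the amount of breathing, drives both the score and the ride speed, so the only way to score highly is to make the ride harder to stay on.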

Human-AI Creative Interaction

There has been interest in incorporating AI into creative interaction since computing began.

Recent work often focuses on current genAI breakthroughs, e.g. Autolume visual generator system and Reprising Elements performance (2023)

Why introduce AI into expressive interaction?

Computational creativity helps create new ideas in three ways (Boden, 1998)

- Produce novel combinations of familiar ideas;

- Explore the potential of conceptual spaces;

- Make transformations that enable the generation of previously impossible ideas.

Creativity and technology: a sociotechnological perspective (Bown, 2021).

- The social nature of human behaviour.

- Artistic behaviour is social in nature (is it?)

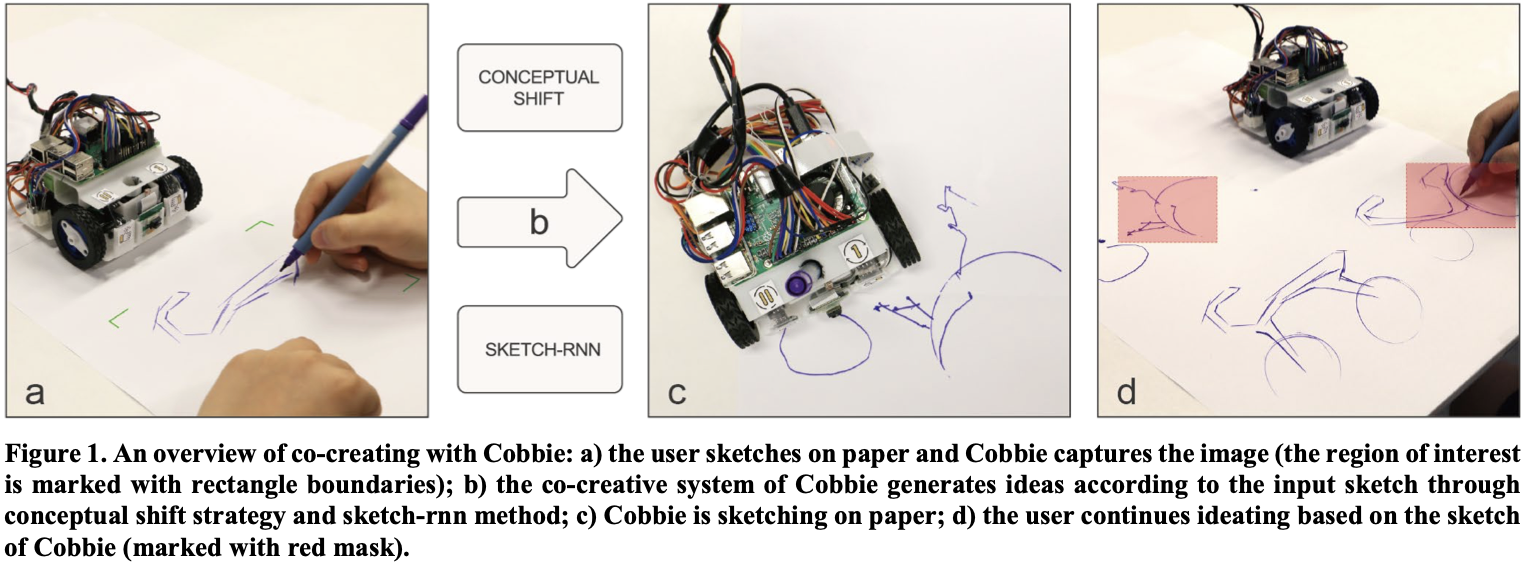

Cobbie: co-creative robots

Cobbie system (Lin et al., 2020)

- Motivation: a co-creative partner can reason about the user’s intention and reliably present novel ideas while leaving the initiative with the user; in human teams this can be hindered by social loafing or a resolute partner.

- Take turns to draw ideas, give the dominant position to the user, and use movements and sound feedback for communication.

- Three human-robot interactions: your turn, pause and draw again, progressing with feedback

Holographic dancing ghost

- Co-creative public dancer (Long et al., 2019; Trajkova et al., 2023, 2024)

- Explore the design of the modular AI agent to creatively collaborate with a dancer.

- A Kinect motion capture device detects the user’s motion, which is visualised as a virtual shadow on a projection screen.

- The humanoid agent shadow dances by analysing the user’s movement and responding with a movement that it deems to be similar in terms of parameters such as energy, tempo, or size.

- Study results showed in-the-moment influences: self, partner, and environment (Trajkova et al., 2024).
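
One simple way to respond "with a movement that it deems to be similar" is a nearest-neighbour lookup over movement features. The sketch below assumes each movement is summarised by three numbers (energy, tempo, size) scaled to 0.0-1.0 and that the agent has a small library of known movements. Both are illustrative assumptions, not a description of the published system.

```python
import math

# Hypothetical movement library: name -> (energy, tempo, size), each 0.0-1.0.
LIBRARY = {
    "slow sway": (0.2, 0.3, 0.4),
    "sharp jump": (0.9, 0.8, 0.7),
    "wide spin": (0.6, 0.5, 0.9),
}


def respond(user_features, library=LIBRARY):
    """Pick the library movement closest to the user's movement features."""
    return min(library, key=lambda name: math.dist(library[name], user_features))


print(respond((0.25, 0.3, 0.35)))
print(respond((0.85, 0.9, 0.6)))
```

A co-creative agent would not always pick the closest match; choosing a deliberately contrasting movement now and then is one way to introduce novelty.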

Recording a video in PowerPoint

For the final project you need to record and upload a presentation video. The specification is:

- must be no longer than 5.5 minutes (330 seconds)

- must be no larger than 1920 x 1080 pixels.

- must be narrated with your voice

- must show video of you speaking
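
The two measurable limits in this specification can be checked with a few lines of code once you know your video's duration and frame size (most video players show these under file properties). This is an unofficial sketch; the function name and messages are hypothetical, and narration and on-camera video still need to be checked by eye.

```python
MAX_SECONDS = 5.5 * 60  # 5.5 minutes = 330 seconds
MAX_WIDTH, MAX_HEIGHT = 1920, 1080


def check_video(duration_s, width, height):
    """Return a list of problems; an empty list means both limits are met."""
    problems = []
    if duration_s > MAX_SECONDS:
        over = duration_s - MAX_SECONDS
        problems.append(f"too long by {over:.0f} s (limit {MAX_SECONDS:.0f} s)")
    if width > MAX_WIDTH or height > MAX_HEIGHT:
        problems.append(
            f"{width} x {height} is larger than {MAX_WIDTH} x {MAX_HEIGHT}"
        )
    return problems


print(check_video(325, 1920, 1080))
print(check_video(400, 3840, 2160))
```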

You can do this easily with PowerPoint, so let’s give it a try.

Questions: Who has a question?

- I can take Catchbox questions up until 2:55

- For after class questions: meet me outside the classroom at the bar (for 30 minutes)

- Feel free to ask about any aspect of the course

- Also feel free to ask about any aspect of computing at ANU! I may not be able to help, but I can listen.