Evaluation

Dr Charles Martin

Announcements

- assignment 2 due next Monday

- remember to use your tutorial time for meeting research clusters and collecting data.

- remember to follow the step-by-step guide.

- go fork the repo!

Markdown Formatting Check: There is a CI/CD job that checks your markdown formatting using the markdownlint-cli tool. Syntax rules are listed here. In our script, rules MD013 (line length) and MD041 (first line should be a top-level heading) are disabled. All other rules are active.

Plan for the class

- research questions

- about evaluation

- types of evaluation

- planning evaluations

- evaluation by inspection

Research Questions

For the final project you will have to choose a research question to explore.

This is a clear question (one sentence) that guides the design of your research project.

RQs have been called survival beacons because they should guide all aspects of our research plans.

How do we choose a research question and write it clearly?

Important skill for any research activity.

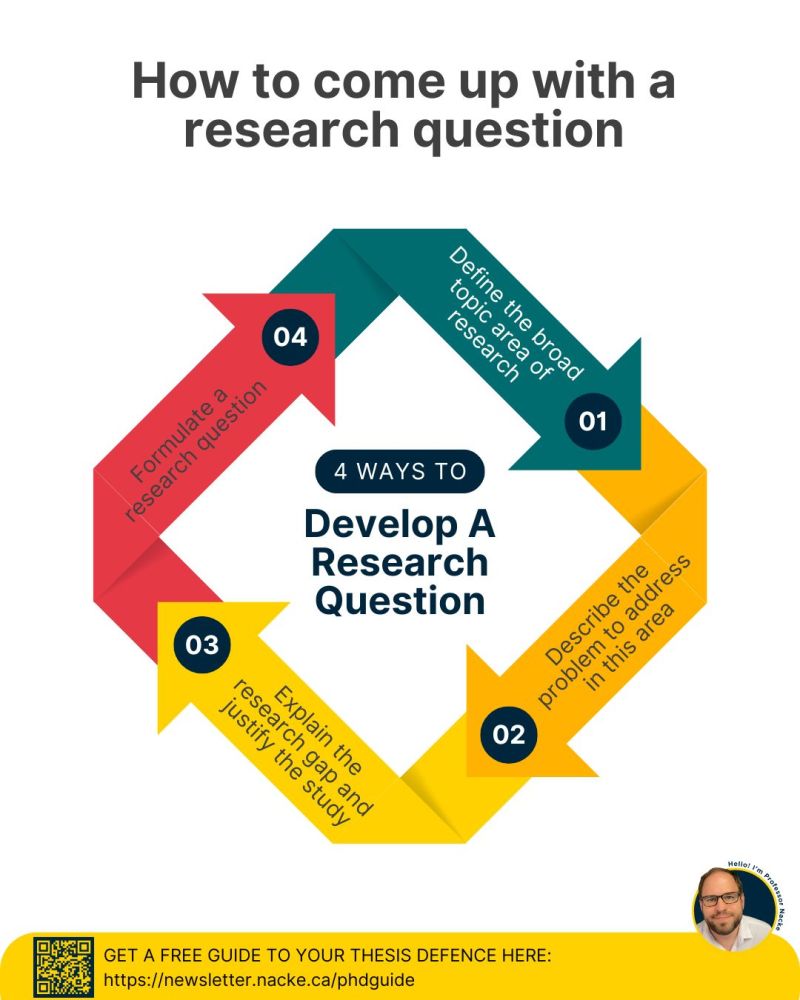

Four steps to write a research question

This framework is inspired by Lennart Nacke, everybody’s favourite HCI writing coach on LinkedIn.

- Outline a broad area of interest

- Identify a problem that needs solving

- Justify solving this problem

- Write the question

To be clear, a research question starts with a question word (what, how, why, can, do, should) and ends with a question mark. It can just be one sentence.

Seems too easy… let’s try it together.

A worked research question example

- Broad area: haptic wearable interfaces

- Problem: keeping focussed in complicated meetings

- Justification: lack of awareness in meetings can lead to poor work performance and embarrassment

- Write the question, here we go:

What effects can a haptic wearable interface have on lack of awareness during meetings and later work performance?

Encodes the broad area, the problem, the justification, the context, etc.

Research Question Bingo

Interfaces:

- haptic feedback gloves

- AR/VR headset

- e-textile clothing

- voice assistant

- gesture recognition

- smart headphones

- ambient light display

- wearable plants

- eye-tracking interface

- multi-touch table

Problems to Solve:

- family meal planning

- language learning while commuting

- caring for houseplants

- focus during remote work

- medication schedules

- teaching kids about conservation

- organising hobby collections

- non-verbal communication

- practising music in small spaces

- tracking community events

Activity: Write a Research Question

Let’s write a research question!

Together, let’s spin the wheels to decide on a broad area and a problem.

Then, decide on a “justification” and write a research question.

Remember that the RQ should include the broad area, the problem, and the justification.

Use the Poll Everywhere link to suggest research questions and vote on the best ones.

Write for 2-3 minutes, vote for 1 minute, then let’s discuss.

About Evaluation

What is evaluation?

Evaluation: collecting and analysing data from user experiences with an artefact.

Goal: to improve the artefact’s design.

Addresses: functionality, usability, user experience

Appropriate for all different kinds of artefacts and prototypes.

Methods vary according to goals.

Why is evaluation important?

- Understanding people

  - Users may not have the same experiences or perspectives as you do

  - Different users use software differently

- Understanding designs

  - Proof that ideas work

  - Understand limitations, affordances, applications

- Business

  - Invest in the right ideas

  - Find problems to solve (before production, before next iteration, etc.)

- Research

  - Evidence for new interactive systems

  - Empirical evidence for (or against) hypotheses

  - New knowledge to answer research questions

What should you evaluate/measure?

Does the design do what the users need and want?

Examples:

- Game app developers: whether young adults find their game fun and engaging compared to other games

- Government authority: whether their online service is accessible to users with a disability

- Children’s talking toy designers: whether six-year-olds enjoy the voice and the feel of the soft toy, and can use it safely

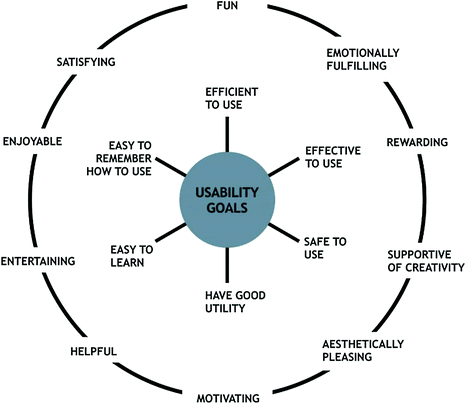

Usability and Usability Goals

Six usability goals:

- Effective to use (effectiveness)

- Efficient to use (efficiency)

- Safe to use (safety)

- Having good utility (utility)

- Easy to learn (learnability)

- Easy to remember how to use (memorability)

Where should you evaluate your design?

Depends on your evaluation goal!

- Lab studies (controlled settings)

- In-the-wild studies (natural settings)

- Remote studies (online behaviour)

Formative vs Summative Evaluation

Evaluation serves different purposes at different stages of the design process

- Formative evaluation:

  - Assessing whether a product continues to meet users’ needs during a design process

  - Early or late stages

- Summative evaluation:

  - Assessing whether a finished product is successful

  - Feeds into an iterative design process

Types of Evaluation

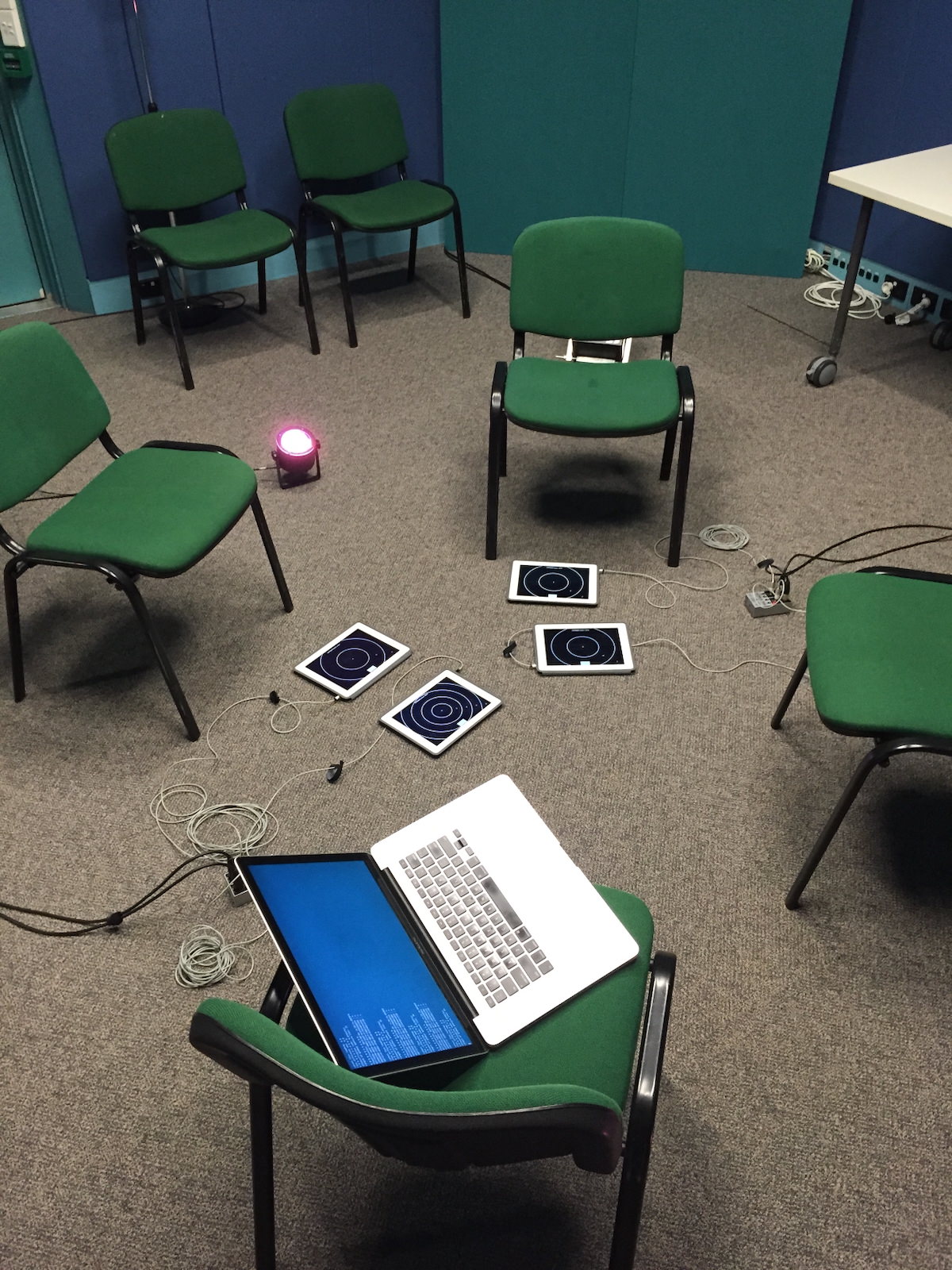

Controlled settings (e.g., Usability testing)

A controlled evaluation setting is not where the technology would normally be used or where the user would normally be.

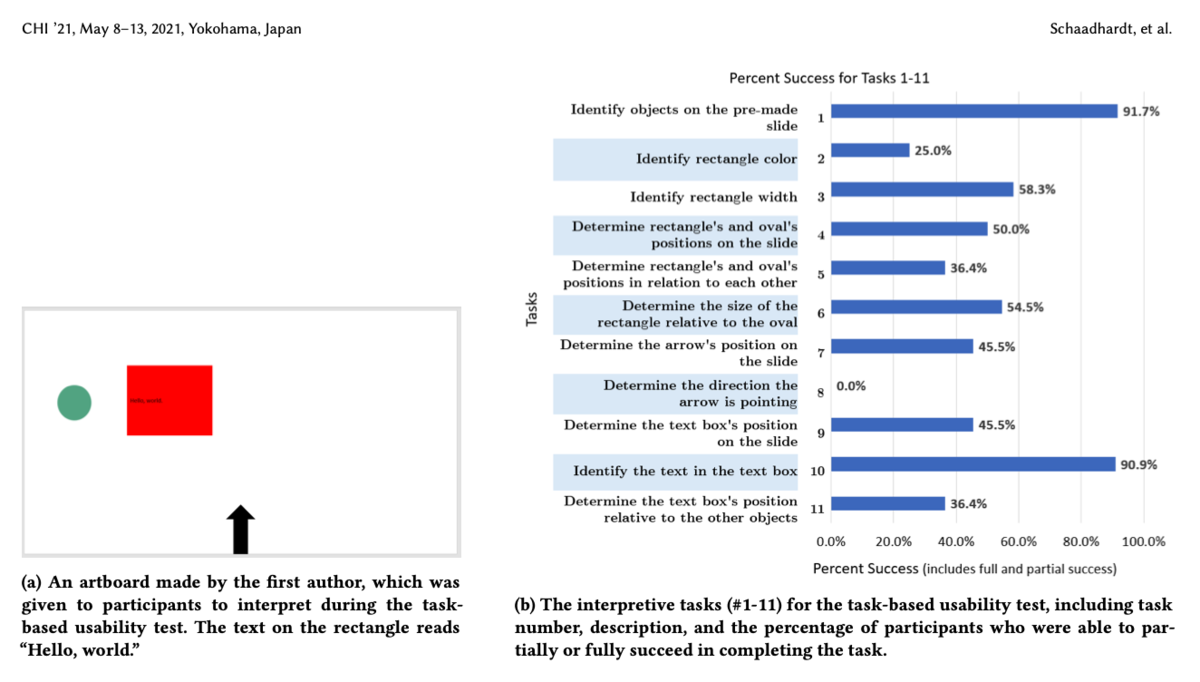

Usability Testing

- Measures: numbers or time (e.g., tasks completed, errors made, time taken)

- Methods: mixture of methods (e.g., think aloud, observation, interviews, questionnaires, data logging and analytics)

- Data: variety of data depending on the methods (e.g., video, audio, facial expressions, key presses, verbal feedback)

- Settings: lab + observation room, mobile usability kit, university classroom

- Number of participants: 5 to 12 as a baseline, but more is better
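As a rough illustration of how the measures above are tallied, the sketch below summarises completion rate, mean errors and mean time on task from a list of task attempts. The `TaskAttempt` record format, the `summarise` function and the sample data are hypothetical, and reporting time only for completed attempts is one common convention rather than a fixed rule.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskAttempt:
    # One participant's attempt at one task (hypothetical record format).
    participant: str
    task: str
    completed: bool
    errors: int
    seconds: float


def summarise(attempts: list[TaskAttempt]) -> dict[str, float]:
    """Summarise common usability-test measures across all attempts."""
    completed = [a for a in attempts if a.completed]
    return {
        # Proportion of attempts in which the task was finished.
        "completion_rate": len(completed) / len(attempts),
        # Average errors per attempt, including failed attempts.
        "mean_errors": mean(a.errors for a in attempts),
        # Time on task, here reported only for successful attempts.
        "mean_time_completed": (
            mean(a.seconds for a in completed) if completed else float("nan")
        ),
    }


if __name__ == "__main__":
    log = [
        TaskAttempt("P1", "checkout", True, 0, 42.0),
        TaskAttempt("P2", "checkout", True, 2, 65.5),
        TaskAttempt("P3", "checkout", False, 4, 120.0),
        TaskAttempt("P4", "checkout", True, 1, 50.5),
    ]
    print(summarise(log))
```

Counts like these are usually reported alongside the qualitative data (think-aloud, observation notes) rather than on their own.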

Usability Testing Example

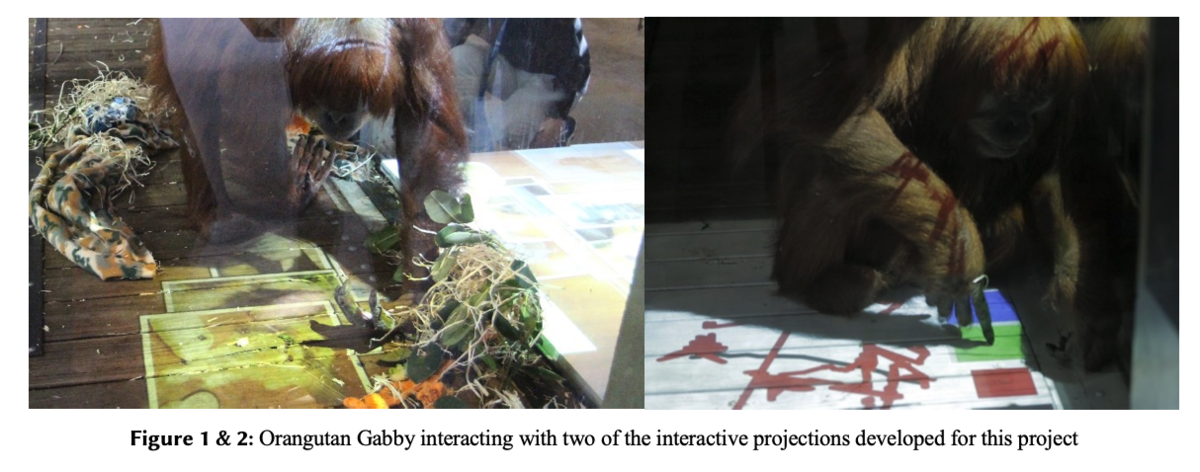

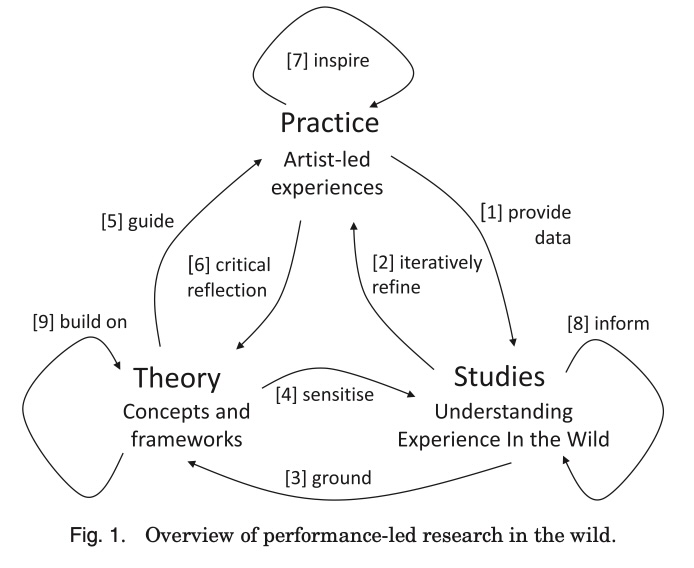

Natural settings (e.g., Field studies)

Evaluating a technology or context of use in the normal setting for the user.

Field studies can:

- Help identify opportunities for new technology

- Establish the requirements for a new design

- Facilitate the introduction of technology or inform deployment of existing technology in new contexts

Helps to establish ecological validity.

Field Studies

- Goals:

  - Understanding how people interact with technologies in “messy worlds” and how technologies will be integrated into contexts

  - Studying use of existing technologies and impacts of introducing new ones

- Methods: emphasis on qualitative methods rather than statistical measures (e.g., observations, interviews, diaries, interaction logging)

- Duration: no fixed length; can be seconds, months, or years

- Paying attention to: use situations, problems/errors, distractions, patterns of behaviour

- How does your presence and involvement shape engagement? Observation vs participant observation

- Findings: used for thematic analysis, vignettes, narratives, critical incident analysis, etc.

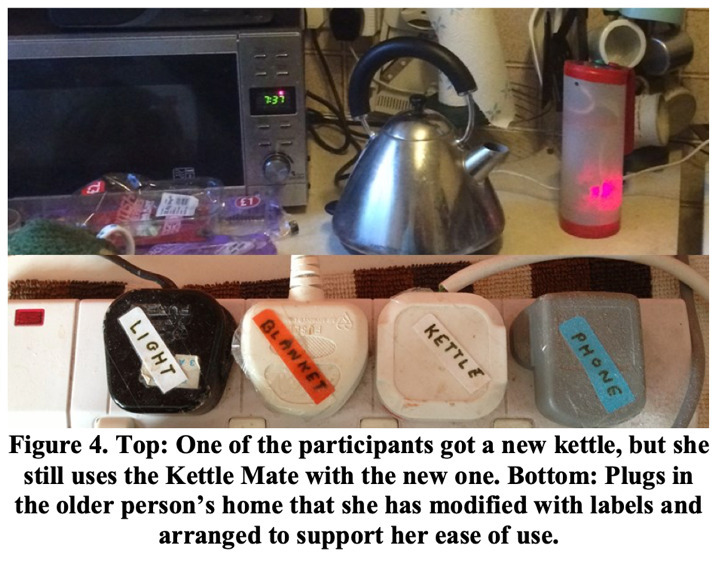

Field Studies Example

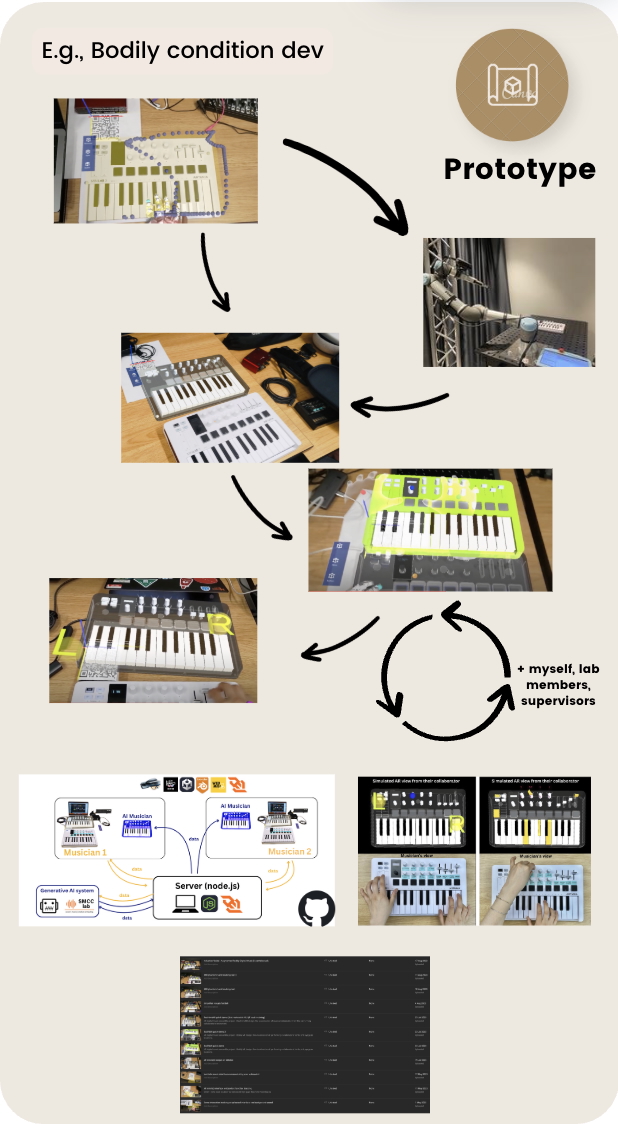

Opportunistic Evaluations

- quick feedback about a design idea in the early design process

- confirm whether it’s worth developing an idea into a prototype

- informal and doesn’t require lots of time or resources

- not a replacement for formal evaluation

- care required with ethics in research (Honours, Masters, PhD): asking supervisors and colleagues for advice is different from collecting data to establish findings.

E.g., designers ask colleagues for design feedback, as in the early design process of Yichen Wang’s arMIDI system with a supervisor and colleagues (Wang et al., 2025).

Which methods to choose from?

The evaluation setting guides which dimensions of an artefact can be evaluated.

- Combinations of methods are used for a richer understanding. E.g., usability testing is combined with observations to identify usability problems and how users use the system.

Pros and Cons

- Controlled settings allow hypothesis testing on specific features for generalisable results.

- Uncontrolled settings offer unexpected insights into perception and experience of new technologies in daily life and work.

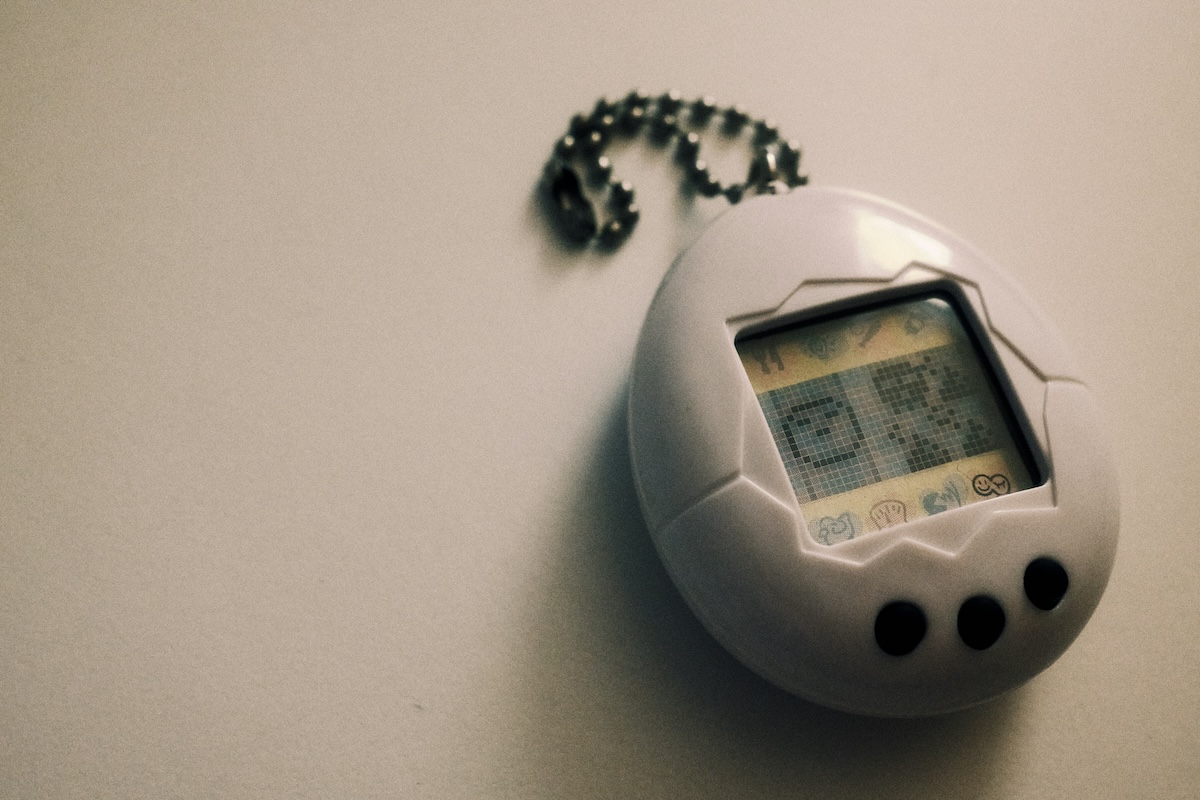

Activity: Evaluating an interactive toy

You’re all HCI researchers and we need to evaluate this interactive toy.

We need to choose:

- how we will evaluate the toy?

- in what environment?

- what information do we need and why?

- what research questions are being asked?

Talk for 2-3 minutes and then we will hear some answers 🗣️🎤⭐️

Planning Evaluations

What do we need to keep in mind to plan evaluations?

Design and Conduct Issues

- Reliability: how well the evaluation method produces the same results on separate occasions under the same circumstances

- Validity: whether the evaluation method measures what it intended to measure

- Ecological validity: how the environment in which an evaluation is conducted influences or distorts results

- Bias: occurs when the results are distorted

- Scope: how much of the findings can be generalised

Ethical Issues

- Risks: what are the risks to participants? (e.g., physical harm, reputational risk, distressing conversations, being identified, etc.)

  - …and how are risks mitigated…

- Benefits: what are the benefits to participants? (e.g., none, helping research, fun experience, getting paid, course credit, etc.)

- Consent: how is informed consent established? (e.g., a participant information sheet and a written form)

- Data: how is data stored and who has access to it? what will happen to it over time?

Universities have processes to approve the ethical aspects of research that collects data from humans following established rules (National Health and Medical Research Council (NHMRC) et al., 2025).

We don’t go deeply into research ethics in this course but the four issues above are the core ones.

Developing an evaluation plan

- Evaluation Goal/Aims

- Participants

- Setting

- Data to collect

- Methods

- Ethical Considerations and Consent

- Data capture, recording, storage

- Analysis method

- Output(s) of evaluation process

Labs and Equipment

- tables, chairs

- places for participants and researchers

- Instructions to participants

- Details, equipment for completing tasks

- Data collection equipment: video, audio recording

- In-person / remote

  - Zoom (e.g., during COVID), online studies

Experimental Variables

- Independent variable: the condition the researcher controls.

- Dependent variable: the outcome we are measuring.

- Independent vars in HCI: different interfaces, input devices, software, colours, computer type

- Dependent vars in HCI: efficiency, accuracy, subjective satisfaction, ease of learning, physical/cognitive demands

Variables shape your study

- Tasks: completing specific tasks, or freely using a technology?

- Interfaces: just using one interface, or comparing two (or more!) different styles

Hypothesis Testing

E.g.:

A blue background in the user interface leads to faster task completion.

- Examine the relationship between variables (independent vs. dependent)

- Null and alternative hypotheses guide testing

- Careful experimental design is essential

Hypotheses must be falsifiable: they can be dismissed or supported, but never proven! (A bit different from the more general “research questions”.)

To dismiss or support a hypothesis we generally need significance testing and quantitative methods.
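To make significance testing a little more concrete, here is a minimal sketch of one option, a two-sided permutation test on mean task-completion times for the blue-background example. The function name, the completion times and the choice of a permutation test (rather than, say, a t-test) are assumptions for illustration. A small p-value means that randomly relabelling the data rarely produces a difference as large as the observed one, which counts as evidence against the null hypothesis that background colour makes no difference.

```python
import random
from statistics import mean


def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in group means.

    Under the null hypothesis the condition labels are arbitrary, so shuffling
    them should often produce a difference at least as large as the observed one.
    """
    rng = random.Random(seed)  # seeded so the result is reproducible
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # randomly relabel which values belong to which group
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    # The +1 terms count the observed labelling itself, so p is never exactly 0.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


if __name__ == "__main__":
    # Hypothetical task-completion times in seconds.
    blue = [31.2, 28.4, 30.1, 29.5, 27.8]
    other = [38.0, 41.3, 36.9, 39.4, 40.2]
    print(f"p = {permutation_test(blue, other):.4f}")
```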

Experiment Design

Which participants test which conditions?

- Different-participant design: each participant sees one condition.

- Same-participant design: everybody sees each condition.

- Matched-participant design: participants who share a trait are matched, and one member of each match is put into each group

- Balanced ordering of conditions (counterbalancing) is important to counter learning effects.

- Design choices affect validity and reliability

- Data collection: think back to the week 4 lecture; this often includes task performance, completion time, errors, subjective satisfaction, etc.
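The sketch below shows one way to plan which participants see which conditions: a balanced Latin square (a Williams design) for ordering conditions in a same-participant design, and shuffled round-robin assignment for a different-participant design. All function names are hypothetical. Counterbalancing like this spreads order effects such as learning or fatigue evenly across conditions; it does not remove them.

```python
import random


def balanced_latin_square(conditions):
    """Presentation orders for a same-participant (within-participants) design.

    Every condition appears once in each position. For an even number of
    conditions, each condition immediately follows every other one exactly once;
    for an odd number, the reversed rows are appended to restore that balance.
    """
    n = len(conditions)
    # First row follows the pattern 0, 1, n-1, 2, n-2, 3, ...
    first = []
    for j in range(n):
        if j == 0:
            first.append(0)
        elif j % 2 == 1:
            first.append((j + 1) // 2)
        else:
            first.append(n - j // 2)
    # Each later row shifts every condition index by the row number (mod n).
    rows = [[(idx + r) % n for idx in first] for r in range(n)]
    if n % 2 == 1:
        rows += [row[::-1] for row in rows]
    return [[conditions[i] for i in row] for row in rows]


def assign_orders(participants, conditions):
    """Give participants the balanced orders in turn, cycling if needed."""
    orders = balanced_latin_square(conditions)
    return {p: orders[k % len(orders)] for k, p in enumerate(participants)}


def randomly_assign(participants, conditions, seed=None):
    """Different-participant (between-participants) design: shuffle, then deal
    participants into conditions so group sizes differ by at most one."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    return {p: conditions[k % len(conditions)] for k, p in enumerate(shuffled)}


if __name__ == "__main__":
    plan = assign_orders(["P1", "P2", "P3", "P4"], ["A", "B", "C", "D"])
    for participant, order in plan.items():
        print(participant, order)
```

With four conditions this gives four orders, so the number of participants is ideally a multiple of four; otherwise some orders are used more often than others.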

Table of Experimental Designs

| Design | Advantages | Disadvantages |
|---|---|---|
| Different participants (between-participants design) | No order effects | Requires many participants; individual differences can affect results (random assignment helps minimize these differences) |
| Same participants (within-participants design) | Eliminates individual differences between conditions | Requires counterbalancing; risk of order effects (e.g., learning or fatigue) |
| Matched participants (pair-wise design) | No order effects; reduces impact of individual differences | Time-consuming to find matched pairs; may miss other influential variables |

In-the-Wild Studies

- Natural setting, minimal control over participants

- Reflects real-world use, which is unpredictable and complex

- Ethical and practical challenges are greater, e.g., participant consent, privacy, equipment issues, and environmental factors.

These studies reveal insights about actual use and long-term integration that lab studies often miss.

People are complicated

HCI is hard. To do a study, you usually need to:

- Plan the study

- Find participants

- Manage communication with them

- Figure out what to do if they don’t show up

- Managing a study requires some social skills! It’s hard work!

Is there any way to do evaluation without users?

Evaluation by Inspection

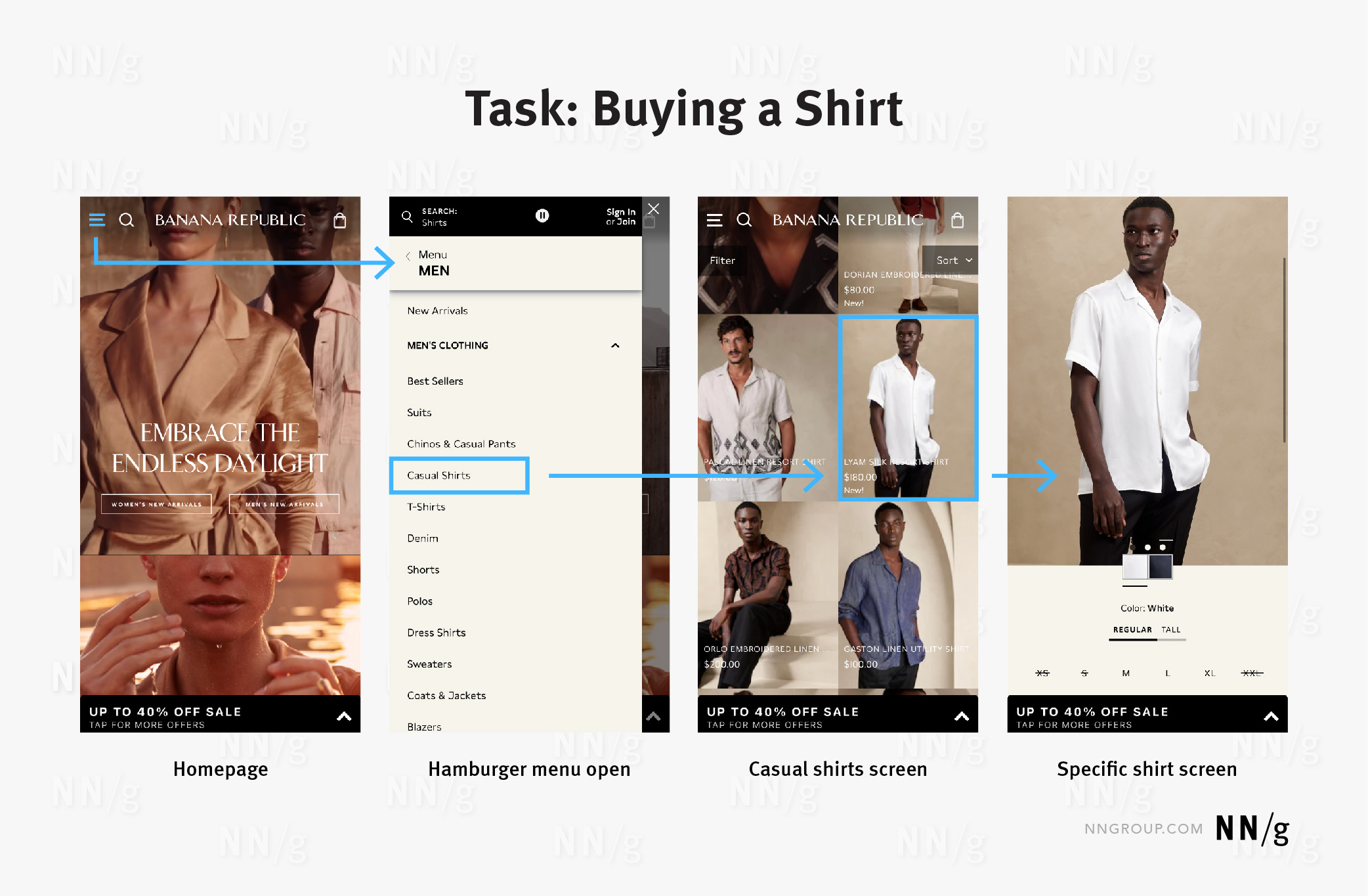

Expert Evaluation

- Conducted by designers and design “experts” rather than with end users

- Inspection methods – expert role plays user

- Heuristic evaluation: Researchers evaluate whether aspects of the design adhere to established usability principles (see over)

- Cognitive walkthroughs: Simulating user reasoning and problem solving at each step in an interaction sequence (evidence, availability, accessibility of correct action)

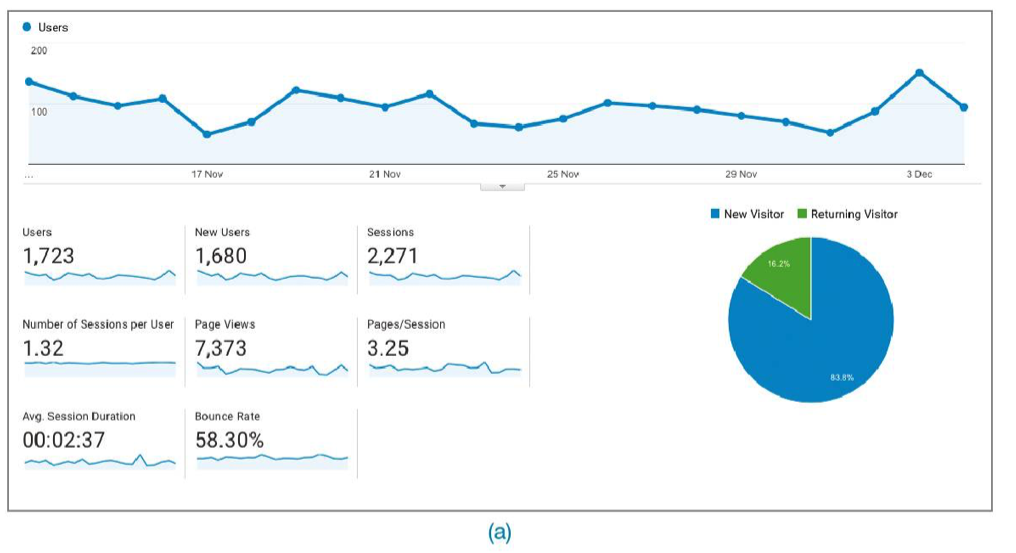

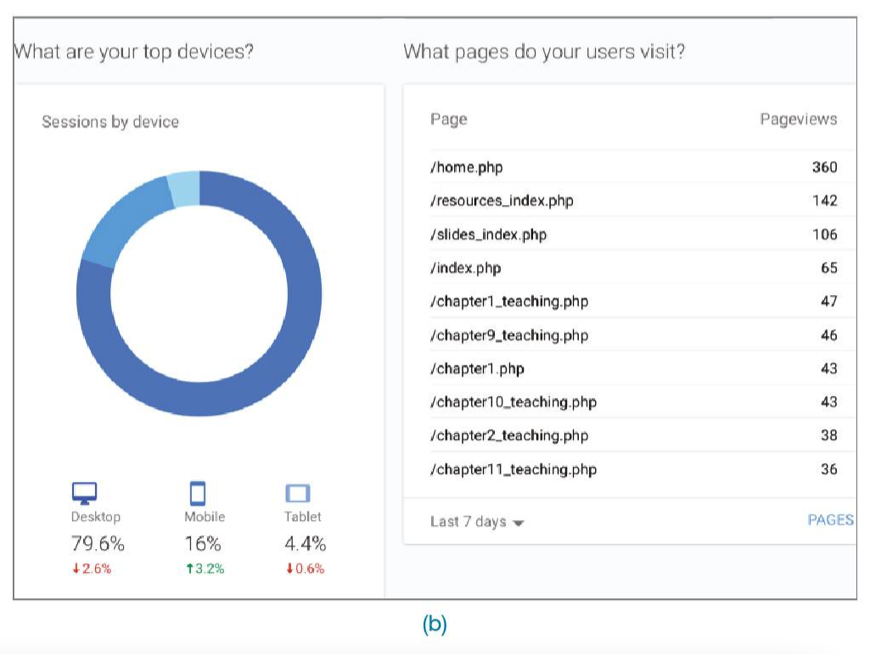

- Analytics: Understanding user demographics and tracing activities (e.g., number of clicks, duration of sessions etc.)

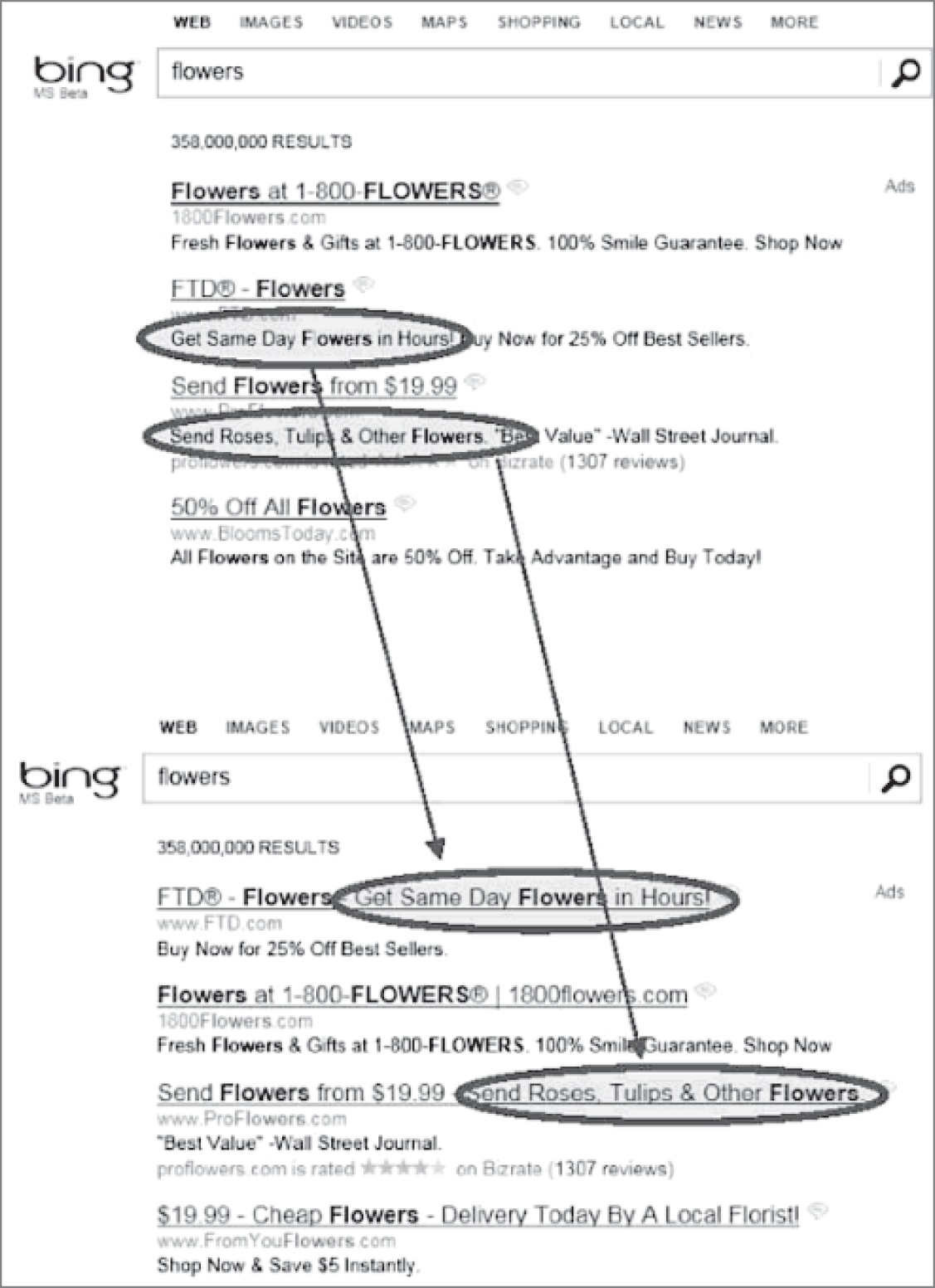

- A/B Testing: A large number of users are assigned to Design A or B, and their use is compared on a “variable of interest” (e.g., number of clicks on advertising during the test period)
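As an illustration of the processing behind analytics measures such as clicks and session duration, this sketch groups raw click events into sessions. The event format `(user_id, timestamp_seconds)`, the function name and the 30-minute inactivity timeout are assumptions (30 minutes is a common default in web analytics tools, not a universal rule).

```python
from collections import defaultdict

# Assumption: 30 minutes without a click ends a session.
SESSION_TIMEOUT_SECONDS = 30 * 60


def sessionise(events, timeout=SESSION_TIMEOUT_SECONDS):
    """Split (user_id, timestamp_seconds) click events into sessions.

    Returns a list of (user_id, clicks_in_session, session_duration_seconds).
    Duration runs from first to last click, so a one-click session lasts 0 s.
    """
    times_by_user = defaultdict(list)
    for user, ts in events:
        times_by_user[user].append(ts)

    sessions = []
    for user, times in times_by_user.items():
        times.sort()
        start = prev = times[0]
        clicks = 1
        for ts in times[1:]:
            if ts - prev > timeout:
                # Gap too long: close the current session and start a new one.
                sessions.append((user, clicks, prev - start))
                start, clicks = ts, 0
            clicks += 1
            prev = ts
        sessions.append((user, clicks, prev - start))
    return sessions


if __name__ == "__main__":
    log = [("u1", 0), ("u1", 60), ("u1", 120), ("u1", 5000), ("u1", 5100), ("u2", 10)]
    for user, clicks, duration in sessionise(log):
        print(user, clicks, duration)
```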

Heuristic Evaluations of User Interfaces (video)

Nielsen’s 10 Usability Heuristics

- Visibility of system status: keep the user informed

- Match between system and real world: system uses language and communication familiar to the user, information is natural and logical

- User control and freedom: users make mistakes, there should be “emergency exits” to cancel and return quickly

- Consistency and standards: users should not wonder whether words, situations or actions mean the same thing, follow conventions

- Error prevention: eliminate error-prone conditions, or check for them and ask users to confirm before they commit to an action

- Recognition rather than recall: make elements, actions, and options visible

- Flexibility and efficiency of use: shortcuts to speed up for experts, allow tailored experiences

- Aesthetic and minimal design: less is more, no unnecessary information

- Help users recognise, diagnose and recover from errors: error messages need plain language, and suggest solutions

- Help and documentation: best if explanation is not needed, if it is, make it good

Web Design Heuristics

Budd (2007) introduces further heuristics focussed on the web; here are some from the list:

- Clarity: Make the system as clear, concise and meaningful as possible for the intended audience.

- Minimise unnecessary complexity and cognitive load: Make the system as simple as possible for people to accomplish their tasks.

- Provide context: Interfaces should provide people with a sense of context in time and space

- Promote a pleasurable and positive experience: people should be treated with respect and the design should be aesthetically pleasing and promote a pleasurable and rewarding experience

Shneiderman’s Eight Golden Rules of Design

- Strive for consistency

- Seek universal usability

- Offer informative feedback

- Design dialogs to yield closure

- Prevent errors

- Permit easy reversal of actions

- Keep users in control

- Reduce short-term memory load

Analytics: Evaluation at Scale

A/B Testing

- Large-scale, online controlled experiment used to compare two designs (A = control, B = new design) by measuring user behavior (e.g., click rates), often without users knowing they are part of a study.

- Uses a between-participants design: users are randomly assigned to different versions, and outcomes are analysed statistically to determine whether observed differences are due to the design and not chance.

- Proper setup is critical: running an A/A test first (both groups see the same design) checks that the testing infrastructure is sound, and careful design is needed to avoid misleading results, as shown in real-world examples like Microsoft Office 2007.
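A minimal sketch of the statistical comparison in an A/B test, assuming the variable of interest is a click rate: a pooled two-proportion z-test, one standard choice for comparing two proportions with large samples. The function name and the counts are hypothetical. The same function can be applied to an A/A split; across repeated A/A comparisons, "significant" results should appear only about as often as the chosen significance level (e.g., 5% of the time), and more often than that suggests a problem with the setup.

```python
from math import erfc, sqrt


def two_proportion_z_test(clicks_a, users_a, clicks_b, users_b):
    """Pooled two-proportion z-test comparing click rates of A (control) and B.

    Returns (z, two_sided_p). This is a normal approximation, so it assumes
    reasonably large numbers of users in each group.
    """
    rate_a = clicks_a / users_a
    rate_b = clicks_b / users_b
    pooled = (clicks_a + clicks_b) / (users_a + users_b)
    se = sqrt(pooled * (1 - pooled) * (1 / users_a + 1 / users_b))
    if se == 0:
        # Nobody clicked (or everybody did) in both groups: no detectable difference.
        return 0.0, 1.0
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p = erfc(abs(z) / sqrt(2))
    return z, p


if __name__ == "__main__":
    # Hypothetical counts: 1,000 users per design.
    z, p = two_proportion_z_test(clicks_a=100, users_a=1000, clicks_b=150, users_b=1000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```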

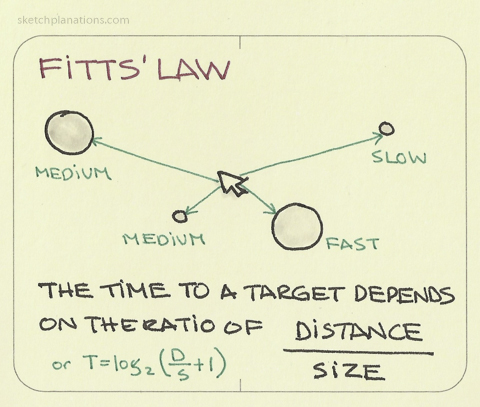

Predictive Models

Estimate user performance without needing real users, using formulas to assess task efficiency. This is useful in early design stages or when testing with users is difficult.

Fitts’ Law (Fitts, 1954):

- predicts how long it takes to point at a target based on its size and distance

- helps designers optimize button placement, size, and spacing on screens and devices.

- applications: input methods (e.g., touch, gaze, tilt), mobile and VR, simulating interactions for users with motor impairments
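Fitts’ law is often written in the Shannon formulation used below, MT = a + b·log2(D/W + 1). Fitts’ 1954 paper used log2(2D/W); the `+ 1` variant is a later, widely used refinement. D is the distance to the target, W is its width, and a and b are constants fitted from pointing data for a particular device and user group; the function names and the constant values here are made up for illustration.

```python
from math import log2


def index_of_difficulty(distance, width):
    """Shannon formulation of Fitts' index of difficulty, in bits."""
    return log2(distance / width + 1)


def movement_time(distance, width, a, b):
    """Predicted pointing time MT = a + b * ID.

    a (seconds) and b (seconds per bit) must be fitted from real pointing data
    for a given device and user group.
    """
    return a + b * index_of_difficulty(distance, width)


if __name__ == "__main__":
    a, b = 0.1, 0.15  # made-up constants for illustration only
    for width in (20, 40, 80):  # target widths in pixels, all 300 px away
        print(f"width {width:>2} px: {movement_time(300, width, a, b):.3f} s")
```

The trend is the useful part for design: at the same distance, wider targets have a lower index of difficulty and so a shorter predicted pointing time.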

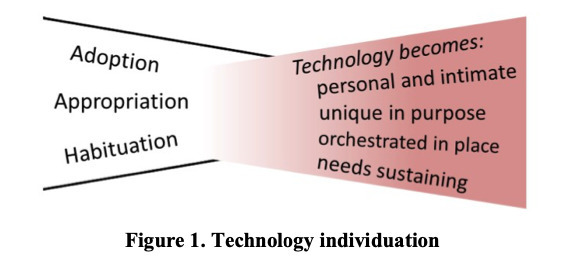

Evaluation after deployment: adoption, use, and non-use

- Adoption/Appropriation/Design-in-use (Ehn, 2008)

- Technology acceptance (Davis, 1989)

- Non-use (Satchell & Dourish, 2009)

- Technology habitation (Soro et al., 2016)

- Technology individuation (Ambe et al., 2017)

Questions: Who has a question?


- I can take Catchbox questions up until 2:55

- For after class questions: meet me outside the classroom at the bar (for 30 minutes)

- Feel free to ask about any aspect of the course

- Also feel free to ask about any aspect of computing at ANU! I may not be able to help, but I can listen.